Nvidia has officially unveiled its first Pascal-based graphics processor, but it's not aiming at consumers with the first implementation: the Tesla P100 is firmly for high-performance computing applications.

Entering Nvidia's HPC accelerator family, the Tesla P100 is the first outing for the company's next-generation 16nm Pascal graphics processor design - meaning it offers an advance glimpse at what consumers can expect, albeit in a money-no-object card designed for GPU-accelerated computation rather than gaming or workstation graphics. Based on 3D FinFET transistors, the Tesla P100's GPU is paired with 16GB of ECC High Bandwidth Memory 2.0 (HBM 2.0) dedicated RAM packaged as chip-on-wafer-on-substrate, or CoWoS, offering 720GB/s of bandwidth and boasting a unified architecture. The GPU itself also packs 14MB of shared register files with 80TB/s of aggregate throughput alongside 4MB of L2 cache.

In short: the Tesla P100 is a beast. Nvidia's internal testing claims that the 15.3-billion-transistor GPU, which measures a hefty 610mm², can manage 21.2 teraflops of compute performance for half-precision floating-point operations, dropping to 10.6 teraflops for single-precision and a still-impressive 5.3 teraflops for double-precision operations. Sadly, integrating the device into servers is going to take a little while: Nvidia claims that the PCI Express bus cannot provide enough bandwidth to keep the card fed, so the Tesla P100 instead uses the company's proprietary NVLink interconnect to talk to the host system at 160GB/s bidirectionally.

Compared to the Maxwell-based Tesla M40, the Tesla P100's specifications are considerably raised: the new design features up to 60 SM processing blocks, with the P100 boasting 56 of that possible total active and available, over the M40's 24. Clock speed, too, has been boosted from 948MHz to 1,328MHz, the number of texture units hiked to 224 from 192, and the result is a boost in performance from 0.2 teraflops to 5.3 teraflops in 64-bit floating-point operations. Interestingly, despite having nearly double the number of transistors, the Pascal P100 draws just 50W more power, at a thermal design power (TDP) of 300W.
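To put the generational jump in perspective, the quoted figures can be turned into simple ratios. The following is a rough sketch using only the numbers in this article; the M40's 250W TDP is an assumption inferred from the "50W more" remark rather than a figure Nvidia is quoted on here, and the `specs` and `uplift` names are purely illustrative.

```python
# Figures as quoted in the article, in the order (Tesla M40, Tesla P100).
# The M40 TDP of 250W is inferred from "50W more" at 300W, not quoted directly.
specs = {
    "active SMs": (24, 56),
    "clock (MHz)": (948, 1328),
    "texture units": (192, 224),
    "FP64 teraflops": (0.2, 5.3),
    "TDP (W)": (250, 300),
}


def uplift(old, new):
    """Return how many times larger the new figure is than the old one."""
    return new / old


# Ratio of the P100 figure to the M40 figure for each specification.
ratios = {name: uplift(old, new) for name, (old, new) in specs.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
```

On these numbers the double-precision gain is far larger than the increase in SM count, clock speed or power draw would suggest on their own, which points to the architecture itself devoting much more of its resources to 64-bit work than the M40 did.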

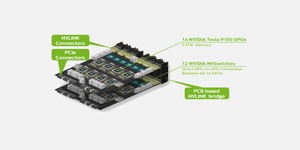

Sadly, while Nvidia was keen to show off the Pascal device, it was less keen to offer firm availability and pricing. It has already built an example implementation, however: the DGX-1 supercomputer, which packs eight Tesla P100 boards for a total of 170 teraflops of half-precision compute performance and is priced at $129,000.
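The DGX-1's headline number can be sanity-checked against the per-board figures quoted earlier. This is a back-of-the-envelope sketch, assuming the system total is simply eight times the single-board peak with no scaling losses; the constant names are illustrative only.

```python
# Per-board peak throughput quoted for the Tesla P100, in teraflops.
P100_TFLOPS = {"half": 21.2, "single": 10.6, "double": 5.3}

BOARDS_IN_DGX1 = 8          # eight Tesla P100 boards per DGX-1
DGX1_PRICE_USD = 129_000    # quoted system price

# Assumption: the aggregate figure is a plain sum of per-board peaks.
dgx1_tflops = {p: t * BOARDS_IN_DGX1 for p, t in P100_TFLOPS.items()}

# Quoted system price divided by aggregate half-precision throughput.
usd_per_half_tflop = DGX1_PRICE_USD / dgx1_tflops["half"]

for precision, total in dgx1_tflops.items():
    print(f"{precision}-precision: {total:.1f} teraflops across the system")
print(f"Roughly ${usd_per_half_tflop:,.0f} per half-precision teraflop")
```

Eight boards at 21.2 teraflops each come to 169.6 teraflops, which rounds to the 170 teraflops Nvidia quotes, so the headline figure lines up with half-precision throughput rather than single or double precision.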

More information is available from the official website.
