Why You Need TRIM on Your SSD

While CPUs and GPUs continue their steady upward climb in performance, their progress has been nothing compared to the improvements that SSDs have achieved over conventional hard disk drives since the first real consumer products started appearing on the market eighteen months ago. It’s why they’re such an enormously exciting part of the industry right now, and with demand continuing to outstrip supply despite increasingly volatile NAND pricing, it’s clear that many enthusiasts feel the same way.

As with any new technology though, and especially one in which so many design choices are unproven, it hasn’t all been smooth sailing when it comes to the end product. SSDs manage data in an entirely different way to conventional hard disks, and as time has progressed, headline-grabbing raw performance numbers have given way to other, more critical factors. The main concern now is an SSD's ability to maintain its paid-for performance over time. This is something that, until recently, SSDs have really struggled with.

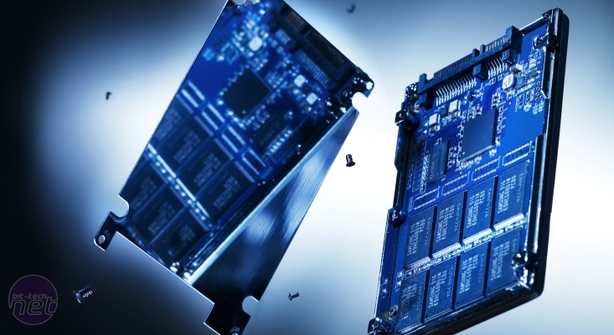

The problem stems from the way SSDs handle junk, or deleted, data spread randomly across their NAND cells. Take, for example, a new drive and a clean 20KB cell. The drive writes two 10KB files to this space, dropping them in at its full write speed thanks to the clean NAND. If the user then deletes one of the 10KB files, the SSD, like a conventional hard disk drive, will simply mark the space occupied by the unwanted file as available to be rewritten. As with a hard drive, though, it crucially will not delete the now-junk physical data.

Unlike a hard drive, which can just write straight over that 10KB of space, when the SSD comes to rewrite data to its 10KB of “free” space in the now dirty cell, it must first read and retain the other 10KB of valid data in the drive’s cache or controller, wipe the whole 20KB cell and then rewrite the complete new set of valid data into the cell. While rewriting data has little impact on performance for a hard disk drive, this read-modify-write process can drastically reduce performance on an SSD in comparison to writing to virgin cells, and is one of the main causes of SSD performance degradation following extended heavy use.
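The 20KB cell example above can be sketched as a toy model. This is only an illustration under the article's simplified picture of two 10KB slots per cell. The `Cell` class, its method names and the operation labels are hypothetical and do not reflect any real controller's firmware.

```python
CLEAN = None      # a slot that has been erased and is ready to be written
JUNK = object()   # a slot holding deleted data that is still physically present


class Cell:
    """Toy 20KB cell made of two 10KB slots (an assumption taken from the example)."""

    def __init__(self, slots=2):
        self.slots = [CLEAN] * slots

    def delete(self, data):
        # Deleting only marks the slot as junk; nothing is physically erased.
        self.slots[self.slots.index(data)] = JUNK

    def write(self, data):
        """Store one 10KB file and return the physical operations it required."""
        if CLEAN in self.slots:
            # Fast path: clean NAND is available, so the drive just writes.
            self.slots[self.slots.index(CLEAN)] = data
            return ["program"]
        if not any(slot is JUNK for slot in self.slots):
            raise ValueError("cell is full of valid data")
        # Slow path (read-modify-write): keep the valid data, wipe the whole
        # cell, then write the valid data back together with the new file.
        kept = [slot for slot in self.slots if slot is not JUNK]
        new_contents = kept + [data]
        self.slots = new_contents + [CLEAN] * (len(self.slots) - len(new_contents))
        return ["read", "erase", "program"]


if __name__ == "__main__":
    cell = Cell()
    print(cell.write("file A"))  # clean cell: a single program step
    print(cell.write("file B"))  # still clean space left
    cell.delete("file A")        # file A becomes junk
    print(cell.write("file C"))  # dirty cell: read, erase, then program
```

In this sketch the third write costs three operations instead of one, which is the penalty the dirty cell imposes.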

Ironically for a device which can theoretically address any given cell at the same speed, SSDs can also become subject to fragmentation as a knock-on effect of dirtied NAND. Having numerous cells filled with junk data means the drive will need to perform more read-modify-write cycles when writing files, causing further performance degradation. A heavily fragmented drive will also be forced to spread files over many more cells and must then address all of those cells when reading the data back, reducing total read speeds too.

The long-claimed solution to these problems is the 'TRIM' command, integrated into Windows 7 since the new operating system’s launch late last year. This command tells the drive which data has been deleted (triggered by either clearing the recycle bin or formatting the drive), allowing it to reorganise written data and scrub the junk in advance. When the drive comes to write data to that cell again, there’s nothing but nice clean NAND waiting for it, which theoretically ensures optimum write performance. The problem is that while the software side of TRIM has been ready and working in Windows 7 since October, it’s only in the last few months that TRIM-enabled firmware updates have started to arrive, first from Indilinx, then Intel and finally from Samsung.
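As a rough illustration of why this helps, the sketch below models a single 20KB cell as a list of two slots and compares a delete with and without TRIM. It is a toy model only: the function names, the `trim_enabled` flag and the operation labels are assumptions made for the example, and real drives schedule their clean-up in far more elaborate ways.

```python
CLEAN = None      # erased slot, ready for new data
JUNK = object()   # deleted data that is still physically stored


def delete(cell, data, trim_enabled):
    """Delete a file and return the clean-up work the drive does in advance."""
    cell[cell.index(data)] = JUNK
    if not trim_enabled:
        # Without TRIM the junk simply stays where it is.
        return []
    # With TRIM the drive knows the slot is junk and can scrub it ahead of
    # time: keep any valid data, wipe the cell, write the valid data back.
    kept = [slot for slot in cell if slot is not CLEAN and slot is not JUNK]
    cell[:] = kept + [CLEAN] * (len(cell) - len(kept))
    return ["read", "erase", "program"] if kept else ["erase"]


def write(cell, data):
    """Store a file and return the operations the write has to wait for."""
    if CLEAN in cell:
        cell[cell.index(CLEAN)] = data
        return ["program"]
    if not any(slot is JUNK for slot in cell):
        raise ValueError("cell is full of valid data")
    # Dirty cell: the full read-modify-write cycle happens at write time.
    kept = [slot for slot in cell if slot is not JUNK]
    cell[:] = kept + [data] + [CLEAN] * (len(cell) - len(kept) - 1)
    return ["read", "erase", "program"]


if __name__ == "__main__":
    for trim_enabled in (False, True):
        cell = ["file A", "file B"]
        background = delete(cell, "file A", trim_enabled)
        foreground = write(cell, "file C")
        print(trim_enabled, background, foreground)
```

The total amount of work is the same in both cases. What changes in this model is when it happens: with TRIM the erase is done at delete time, so the later write only needs a single program step.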
