Nvidia GeForce 9800 GTX 512MB graphics card

Manufacturer: Nvidia

UK Price (as reviewed): £210 (inc. VAT) MSRP

US Price (as reviewed): $299 (ex. Tax) MSRP

Ever since the launch of the GeForce 8800 GTX in November 2006, the discrete graphics card market—at least at the high-end—has been a one-sided affair, with Nvidia dominating proceedings.

And with that, things haven’t really progressed an awful lot – we had the GeForce 8800 Ultra come to market almost eleven months ago just a few short weeks before the launch of the ill-fated Radeon HD 2900 XT. This was nothing more than a speed-bumped GeForce 8800 GTX with a nice new cooling solution that Nvidia wanted consumers to pay over-the-odds for.

The ATI Radeon HD 3870 X2 was AMD’s next attempt to create some noise at the high-end and while it did a reasonable job, it didn’t really rumble as loudly as the troubled platform maker would have hoped. While it did win some battles, it didn’t win the war in our eyes and since last year’s saga surrounding GeForce 7950 GX2 driver support, we paid particular attention to the 3870 X2’s drivers.

The four GeForce 9800 GTX cards we were sent.

Even though Nvidia didn’t lose its overall crown, the company felt that AMD was too close for comfort because, just a couple of weeks ago, Nvidia strapped a couple of mid-range GPUs onto one graphics card (albeit with a pair of PCBs facing each other) to extend its performance lead. The problem, though, is that dual-GPU solutions just aren’t attractive to everyone, both because of the compromises associated with going down this route and because of the reliance on driver support. Gamers just want to play games with the best possible experience from day one, not a week or so after a game’s release.

So fast forward to today and Nvidia is releasing the long-awaited successor to the GeForce 8800 GTX—it’ll come as no surprise to you that it’s called the GeForce 9800 GTX—and we’ve spent the last week or so giving it a thorough going over in a slew of benchmarks in Windows Vista Service Pack 1. If you're wondering how the first Vista Service Pack affects gaming performance, it's something we covered last week in quite a bit of detail.

In the run up to the launch, we received cards from BFG Tech, Leadtek, Point of View and Zotac—all are clocked at the same speeds as the reference card—and so on top of looking at the various bundles supplied by these four partners, we’ll also be investigating how one can expect a GeForce 9800 GTX to overclock later on in the article. Before we get to that though, we’ll have a look at what the GeForce 9800 GTX actually is… and how things have moved forwards since November 2006.

What's not changed...

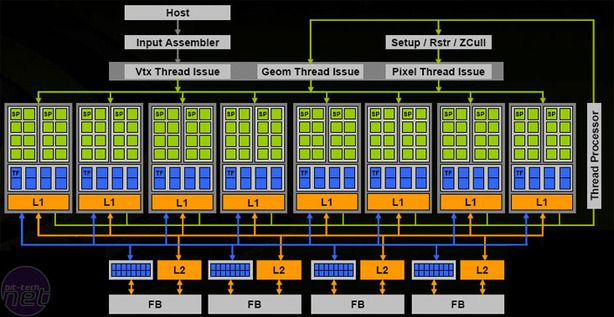

Nvidia’s GeForce 9800 GTX is based on the G92-420 GPU and features a full 128 stream processors, 64 texture units (for both addressing and filtering) and 16 ROPs. The ROPs are split into four clusters; each is able to process four pixels per clock and is also connected to a 64-bit memory interface. This means that there’s a 256-bit memory interface on the GPU and it connects out to eight 64MB GDDR3 DRAMs, making a total of 512MB of video memory. If you want a full rundown on what G92 is, please check through our GeForce 8800 GT and GeForce 8800 GTS 512MB reviews.

Architecturally speaking, the GeForce 9800 GTX is the same as the GeForce 8800 GTS 512MB that Nvidia released back in December. What makes this card different from a technology standpoint is its clock speeds and the fact that it supports HybridPower. Both the 9800 GTX and the GeForce 8800 GTS 512 support the new PureVideo technology released with the GeForce 9600 GT, although the latter still doesn’t have driver support yet; we’re waiting for Nvidia to release a 173-series Forceware driver for the other G92-based cards.

In terms of clock speeds, the reference GeForce 9800 GTX comes with a 675MHz core clock and a 1,688MHz shader clock, while the memory frequency is set to 1,100MHz (2,200MHz effective). This gives some reasonably good theoretical throughput: doing the calculations gives around 432 GigaFLOPS of compute power, 43.2 GigaTexels per second of bilinear texture filtering throughput, a fill rate of 10,800 Megapixels per second and 70.4GB per second of memory bandwidth.
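For anyone who wants to check the arithmetic, here is a minimal Python sketch of how those theoretical figures fall out of the unit counts and clocks quoted above. The function names are our own, and the compute figure assumes two floating-point operations per stream processor per clock (one multiply-add), which is the assumption that reproduces the 432 GigaFLOPS number; treat it as a back-of-the-envelope check, not Nvidia's official methodology.

```python
# Reference GeForce 9800 GTX figures as quoted in the text.
STREAM_PROCESSORS = 128
TEXTURE_UNITS = 64
ROPS = 16
CORE_MHZ = 675
SHADER_MHZ = 1688
MEMORY_EFFECTIVE_MHZ = 2200  # 1,100MHz GDDR3, double data rate
BUS_WIDTH_BITS = 256

# Assumption (not stated in the article): each stream processor issues one
# multiply-add per clock, counted as two floating-point operations.
FLOPS_PER_SP_PER_CLOCK = 2


def gigaflops() -> float:
    """Peak shader throughput in GigaFLOPS."""
    return STREAM_PROCESSORS * SHADER_MHZ * FLOPS_PER_SP_PER_CLOCK / 1000


def gigatexels() -> float:
    """Peak bilinear texture filtering rate in GigaTexels per second."""
    return TEXTURE_UNITS * CORE_MHZ / 1000


def megapixels() -> int:
    """Peak pixel fill rate in Megapixels per second."""
    return ROPS * CORE_MHZ


def bandwidth_gb_s() -> float:
    """Peak memory bandwidth in GB per second (bus width in bytes x data rate)."""
    return BUS_WIDTH_BITS / 8 * MEMORY_EFFECTIVE_MHZ / 1000


if __name__ == "__main__":
    print(f"Compute:   {gigaflops():.1f} GigaFLOPS")
    print(f"Texturing: {gigatexels():.1f} GigaTexels/s")
    print(f"Fill rate: {megapixels()} Megapixels/s")
    print(f"Bandwidth: {bandwidth_gb_s():.1f} GB/s")
```

These are peak numbers only; real-world performance depends on how well a game keeps each part of the GPU fed.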

Inevitably, comparisons are going to be made between the GeForce 9800 GTX and the card it’s replacing, the GeForce 8800 GTX, and there are a number of areas where the former falls down. First of all, pixel fill rate has been reduced, which means that there may be some slowdowns at either high resolutions or when anti-aliasing is enabled. In addition to this, both memory bandwidth and memory size have been reduced as well, although there have been some improvements in Nvidia’s compression algorithms that should help to make up some of the deficit—but probably not all of it, as I still expect to see slowdowns at higher resolutions and with AA applied.

What’s more, the GeForce 9800 GTX’s shader throughput has only been increased by 25 percent over the 8800 GTX. We’re used to some pretty big performance jumps generation-upon-generation, so this one is a little disappointing, especially considering the memory bandwidth and size reduction. Sure, texture throughput has been increased massively over the 8800 GTX, but it’s only around four percent faster than the GeForce 8800 GTS 512 in that respect.
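To put rough numbers on those comparisons, the sketch below works out the percentage differences. The GeForce 9800 GTX figures come from this article; the GeForce 8800 GTX and GeForce 8800 GTS 512 reference specifications are not quoted here and are filled in as assumptions from those cards' published reference specs, so check them against the original reviews before relying on the output. The helper name `pct_change` is ours.

```python
def pct_change(new: float, old: float) -> float:
    """Percentage change going from old to new."""
    return (new - old) / old * 100.0


# GeForce 9800 GTX: figures quoted in this article.
gtx_9800 = {"sps": 128, "tmus": 64, "rops": 16, "core_mhz": 675,
            "shader_mhz": 1688, "mem_eff_mhz": 2200, "bus_bits": 256,
            "mem_mb": 512}

# ASSUMPTION: GeForce 8800 GTX reference specs (not stated in this article).
gtx_8800 = {"sps": 128, "rops": 24, "core_mhz": 575,
            "shader_mhz": 1350, "mem_eff_mhz": 1800, "bus_bits": 384,
            "mem_mb": 768}

# ASSUMPTION: GeForce 8800 GTS 512 reference specs (not stated in this article).
gts_512 = {"tmus": 64, "core_mhz": 650}


def shader_rate(card: dict) -> float:
    """Relative shader throughput: stream processors x shader clock."""
    return card["sps"] * card["shader_mhz"]


def fill_rate(card: dict) -> float:
    """Pixel fill rate in Megapixels per second: ROPs x core clock."""
    return card["rops"] * card["core_mhz"]


def bandwidth(card: dict) -> float:
    """Memory bandwidth in GB per second."""
    return card["bus_bits"] / 8 * card["mem_eff_mhz"] / 1000


def texel_rate(card: dict) -> float:
    """Texture rate in Megatexels per second: texture units x core clock."""
    return card["tmus"] * card["core_mhz"]


shader_change = pct_change(shader_rate(gtx_9800), shader_rate(gtx_8800))
fill_change = pct_change(fill_rate(gtx_9800), fill_rate(gtx_8800))
bandwidth_change = pct_change(bandwidth(gtx_9800), bandwidth(gtx_8800))
memory_change = pct_change(gtx_9800["mem_mb"], gtx_8800["mem_mb"])
texture_change_vs_gts = pct_change(texel_rate(gtx_9800), texel_rate(gts_512))

if __name__ == "__main__":
    print(f"Shader throughput vs 8800 GTX:   {shader_change:+.1f}%")
    print(f"Pixel fill rate vs 8800 GTX:     {fill_change:+.1f}%")
    print(f"Memory bandwidth vs 8800 GTX:    {bandwidth_change:+.1f}%")
    print(f"Memory size vs 8800 GTX:         {memory_change:+.1f}%")
    print(f"Texture rate vs 8800 GTS 512:    {texture_change_vs_gts:+.1f}%")
```

Under those assumed specs, the shader gain works out to roughly 25 percent and the texturing gain over the 8800 GTS 512 to roughly four percent, while fill rate, bandwidth and memory size all come out lower than the 8800 GTX.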
