Manufacturer: Nvidia

Price (as reviewed): £528.74 (inc VAT)

Following the rumours that spread across the web last week, most people were expecting to see AMD unveil its latest R600 family of graphics cards today. Unfortunately for those desperate to see how R600 stacks up, that isn’t what you’re going to see rearing its head from the tubes this afternoon. Instead, Nvidia is back to launch its high-end refresh after GeForce 8800 GTX’s near seven-month reign at the top of the tree.

It’s time to say hello to GeForce 8800 Ultra.

Nvidia hasn’t released an Ultra for two generations now, with the last one being the horribly delayed GeForce 6800 Ultra – it took far too long for that product to become available for consumers to buy. However, many would argue that the GeForce 7800 GTX 512 certainly deserved the Ultra nomenclature based on its shoddy availability.

So, GeForce 8800 Ultra has a reputation to live up to but we’re certainly hoping that history won’t repeat itself – we’re told that it’s not going to be another limited edition. However, one thing has changed with this launch: you’re not going to be able to buy the hardware by the time you’ve read the review. Instead, you’re going to have to wait a couple of weeks, as availability is scheduled for May 15th.

The company explained to us that the reason for this is that it is attempting to cut down on the number of pre-launch leaks. It’s also an attempt to prevent the bizarre instances where consumers have been able to buy the hardware days before its release, sparking Internet fame in some parts of the world (sorry guys! – Ed.).

G80 with Go Faster Stripes:

As has been the case in the past, Nvidia’s GeForce 8800 Ultra is essentially a GeForce 8800 GTX with go faster stripes and a few tweaks to the manufacturing process in its A3 revision silicon. If you’re familiar with the architecture behind Nvidia’s G80 graphics processing unit, there’s not much more to learn about the Ultra – it’s merely a clock speed bump.

Like the GeForce 8800 GTX, there are a total of 128 1D scalar stream processors inside the 8800 Ultra. Each of these stream processors is designed to perform all of the shader operations and calculations sent to the GPU by today’s 3D graphics engines in both floating point and integer form. Also, each of G80’s ALUs is capable of issuing both a MADD and a MUL instruction in the same clock cycle. In Nvidia’s GeForce 8800 GTX, the shader ALUs were clocked at 1350MHz – Nvidia has increased this clock to 1500MHz on its GeForce 8800 Ultra, which represents an 11 percent shader core frequency increase.
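To put the shader clock bump into perspective, here is a rough sketch of the theoretical peak shader throughput for both cards, using the figures quoted above. It assumes the common counting convention of two floating point operations for a MADD and one for a MUL, so three per stream processor per clock; the function and variable names are ours, purely for illustration, and real-world throughput will fall short of these peaks.

```python
# Theoretical peak shader throughput, using the figures quoted in the review.
# Assumption: a MADD counts as 2 floating point operations and a MUL as 1,
# giving 3 operations per stream processor per clock.

STREAM_PROCESSORS = 128
SHADER_CLOCK_MHZ = {"GeForce 8800 GTX": 1350, "GeForce 8800 Ultra": 1500}


def peak_gflops(stream_processors, shader_clock_mhz, flops_per_clock=3):
    """Return the theoretical peak throughput in GFLOPS."""
    return stream_processors * flops_per_clock * shader_clock_mhz / 1000.0


gtx_gflops = peak_gflops(STREAM_PROCESSORS, SHADER_CLOCK_MHZ["GeForce 8800 GTX"])
ultra_gflops = peak_gflops(STREAM_PROCESSORS, SHADER_CLOCK_MHZ["GeForce 8800 Ultra"])

# Because the number of stream processors is unchanged, the uplift is
# identical to the shader clock increase.
uplift_percent = (ultra_gflops / gtx_gflops - 1.0) * 100.0

print(f"GTX:   {gtx_gflops:.1f} GFLOPS")
print(f"Ultra: {ultra_gflops:.1f} GFLOPS ({uplift_percent:.1f} percent higher)")
```

Under those assumptions, the GTX works out at roughly 518 GFLOPS and the Ultra at 576 GFLOPS, which is the same 11 percent gap as the clock speeds.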

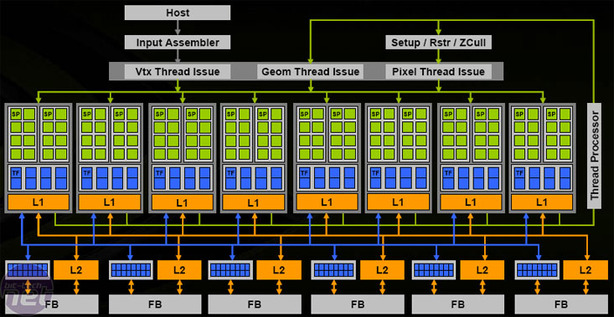

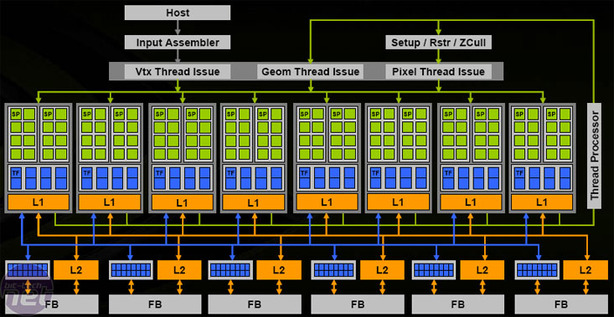

The shader processors are split into eight clusters of 16, each of which shares four texture address units and eight texture filtering units, along with both L1 and L2 caches. Like GeForce 8800 GTX, the Ultra features a total of 32 texture address units and 64 texture filtering units. These are completely decoupled from the stream processors, meaning that it’s possible to texture whilst still processing other shader operations. G80’s texture units run at a different clock speed to the stream processors – 612MHz on the Ultra, compared to 575MHz on the GTX.

Along with the texture units, both the render backend (ROPs) and set up engine also run at the same 612MHz clock speed. This clock frequency is what Nvidia calls the ‘core clock’, as it represents the clock speed at which most of the chip operates. The increase isn’t quite as healthy as the shader clock’s, but it does represent a bump of roughly six and a half percent.
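For those who like to see the sums, the sketch below works out the core clock increase and what it means for peak texturing rates. It assumes that each texture address and filtering unit handles one texel per core clock, which is a simplification on our part; the helper names are hypothetical.

```python
# Core clock domain: percentage bump and peak texture rates.
# Assumption: each texture unit processes one texel per core clock.

CORE_CLOCK_MHZ = {"GTX": 575, "Ultra": 612}
CLUSTERS = 8
ADDRESS_UNITS_PER_CLUSTER = 4
FILTER_UNITS_PER_CLUSTER = 8


def percent_increase(old, new):
    """Return the increase from old to new as a percentage of old."""
    return (new - old) / old * 100.0


def rate_gtexels(units, clock_mhz):
    """Return a peak rate in gigatexels per second."""
    return units * clock_mhz / 1000.0


address_units = CLUSTERS * ADDRESS_UNITS_PER_CLUSTER  # 32 in total
filter_units = CLUSTERS * FILTER_UNITS_PER_CLUSTER    # 64 in total

core_bump = percent_increase(CORE_CLOCK_MHZ["GTX"], CORE_CLOCK_MHZ["Ultra"])
ultra_address_rate = rate_gtexels(address_units, CORE_CLOCK_MHZ["Ultra"])
ultra_filter_rate = rate_gtexels(filter_units, CORE_CLOCK_MHZ["Ultra"])
gtx_filter_rate = rate_gtexels(filter_units, CORE_CLOCK_MHZ["GTX"])

print(f"Core clock increase: {core_bump:.1f} percent")
print(f"Ultra texture addressing: {ultra_address_rate:.1f} GTexels/s")
print(f"Ultra texture filtering:  {ultra_filter_rate:.1f} GTexels/s "
      f"(GTX: {gtx_filter_rate:.1f} GTexels/s)")
```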

Nvidia's G80 graphics processing unit -- flow diagram

The GeForce 8800 Ultra’s render backend features a total of six pixel output engines that are each capable of four pixels per clock, making a total of 24 ROPs (in traditional terms) – this is the same as GeForce 8800 GTX. With colour and Z processing, the ROPs are capable of 24 pixels per clock and, with Z-only processing, they are capable of 192 pixels per clock if there is only a single sample used for each pixel.
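As a quick illustration of what those per-clock figures mean at the Ultra’s 612MHz core clock, the sketch below multiplies them out into peak fill rates. These are theoretical maximums only and the constant names are our own.

```python
# Peak ROP throughput at the Ultra's core clock, from the per-clock figures
# quoted in the review. These are theoretical maximums, not measurements.

PIXEL_OUTPUT_ENGINES = 6
PIXELS_PER_ENGINE_PER_CLOCK = 4   # colour and Z processing
Z_ONLY_PIXELS_PER_CLOCK = 192     # whole chip, single sample per pixel
ULTRA_CORE_CLOCK_MHZ = 612

# Six engines at four pixels per clock gives the 24 'traditional' ROPs.
total_rops = PIXEL_OUTPUT_ENGINES * PIXELS_PER_ENGINE_PER_CLOCK

colour_fill_gpixels = total_rops * ULTRA_CORE_CLOCK_MHZ / 1000.0
z_only_fill_gpixels = Z_ONLY_PIXELS_PER_CLOCK * ULTRA_CORE_CLOCK_MHZ / 1000.0

# Z-only work runs eight times faster than colour and Z per clock.
z_only_speedup = Z_ONLY_PIXELS_PER_CLOCK // total_rops

print(f"ROPs: {total_rops}")
print(f"Colour + Z fill rate: {colour_fill_gpixels:.1f} GPixels/s")
print(f"Z-only fill rate:     {z_only_fill_gpixels:.1f} GPixels/s "
      f"({z_only_speedup}x)")
```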

G80’s ROPs also support simultaneous HDR and anti-aliasing with FP16 and FP32 render targets, meaning you can use all of Nvidia’s anti-aliasing algorithms with 128-bit HDR enabled. In addition to this, you also get high quality angle-independent anisotropic filtering by default. In the past, we’ve been pretty hard on Nvidia for its shoddy texture filtering quality, but thankfully the company adopted a ‘no compromises’ stance with its GeForce 8-series architecture.

Each of the pixel output engines is attached to its own 64-bit memory channel, amounting to a 384-bit memory bus width. Nvidia has increased the memory clock from 900MHz (1800MHz effective) to 1080MHz (2160MHz effective), which equates to a 20 percent increase. This takes the GeForce 8800 Ultra’s memory bandwidth through the 100GB per second barrier to 103.7GB per second, thanks to the Samsung BJ08 GDDR3 DRAM chips on the board, which are rated to 2200MHz.
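The bandwidth figure is easy enough to check: bus width in bytes multiplied by the effective memory clock. The sketch below does exactly that for both cards, assuming a gigabyte of 10^9 bytes; the function name is ours, for illustration only.

```python
# Memory bandwidth = (bus width in bytes) x (effective memory clock).
# Assumption: 1GB is taken as 10^9 bytes.

MEMORY_CHANNELS = 6          # one per pixel output engine
CHANNEL_WIDTH_BITS = 64
EFFECTIVE_CLOCK_MHZ = {"GTX": 1800, "Ultra": 2160}


def bandwidth_gb_per_s(bus_width_bits, effective_clock_mhz):
    """Return peak memory bandwidth in GB per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_clock_mhz / 1000.0


bus_width_bits = MEMORY_CHANNELS * CHANNEL_WIDTH_BITS  # 384-bit

gtx_bandwidth = bandwidth_gb_per_s(bus_width_bits, EFFECTIVE_CLOCK_MHZ["GTX"])
ultra_bandwidth = bandwidth_gb_per_s(bus_width_bits, EFFECTIVE_CLOCK_MHZ["Ultra"])
bandwidth_increase = (ultra_bandwidth / gtx_bandwidth - 1.0) * 100.0

print(f"Bus width: {bus_width_bits}-bit")
print(f"GTX:   {gtx_bandwidth:.1f} GB/s")
print(f"Ultra: {ultra_bandwidth:.1f} GB/s ({bandwidth_increase:.0f} percent higher)")
```

That gives 86.4GB per second for the GTX and 103.68GB per second for the Ultra, which rounds to the 103.7GB per second quoted above.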

The increased clock speeds aren’t all that has changed with GeForce 8800 Ultra though, as Nvidia has been busy making efficiency improvements to the 90-nanometre manufacturing process it is using. As a result, GeForce 8800 Ultra actually uses less power than the GeForce 8800 GTX. At launch, GeForce 8800 GTX had a 185W maximum TDP and, over the past seven months, Nvidia has managed to get that down to 177W. However, the company has gone one step further with GeForce 8800 Ultra because, despite the higher clocks, its maximum TDP is rated at 175W.

Without further ado, let’s have a look at some hardware...
