Overclocking:

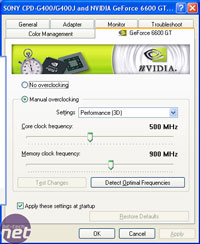

We took the time to overclock this video card to give an idea of how much extra performance there is under the hood. As usual, this may not represent the clocks that every GeForce 6600GT on AGP can achieve, but it should give a good indication. We managed to overclock the video card from its default clock speeds up to 556/1100MHz, which isn't too bad when you consider that the memory is only rated to 1000MHz - we've gained 200MHz on the memory clocks and a healthy 56MHz on the core.
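To put those gains in proportion, here is a minimal sketch of the arithmetic. The default clocks are not quoted directly above, so we infer them from the stated gains (556 - 56 for the core, 1100 - 200 for the memory); the function and variable names are our own and purely illustrative.

```python
def overclock_gain(default_mhz, overclocked_mhz):
    """Return (absolute gain in MHz, relative gain in percent)."""
    gain = overclocked_mhz - default_mhz
    return gain, 100.0 * gain / default_mhz


# Overclocked speeds and memory rating as quoted in the text.
OC_CORE, OC_MEM = 556, 1100
MEM_RATED = 1000

# Assumption: default clocks inferred from the stated gains.
DEFAULT_CORE = OC_CORE - 56
DEFAULT_MEM = OC_MEM - 200

core_gain, core_pct = overclock_gain(DEFAULT_CORE, OC_CORE)
mem_gain, mem_pct = overclock_gain(DEFAULT_MEM, OC_MEM)

# How far the memory ended up beyond its rated speed.
over_rating = OC_MEM - MEM_RATED

print(f"Core:   +{core_gain}MHz ({core_pct:.1f}%)")
print(f"Memory: +{mem_gain}MHz ({mem_pct:.1f}%)")
print(f"Memory beyond rated speed: {over_rating}MHz")
```

On these figures the memory gain is roughly twice the core gain in relative terms, and the memory is running past its rated speed rather than merely catching up to it.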

The increase in clock speeds allowed us to increase the resolution in Doom 3 from 1280x1024 2xAA 8xAF to 1600x1200 0xAA 8xAF, while still delivering an average frame rate of around 50 frames per second and a very playable minimum frame rate of 25 frames per second on our test system. We could now achieve a smooth and enjoyable gaming experience in Doom 3 at 1600x1200. In Far Cry, it allowed us to increase the resolution to 1280x1024 with 4xAF at the same in-game detail settings that we used for our manual run-through on this video card.

Final Thoughts:

We have slightly mixed feelings about the GeForce 6600GT on AGP at the moment, due to a number of rather telling issues that we came across. These issues fall under the heading of driver bugs - there are a number of rendering quality problems that we see as required fixes. The hitching issue that we discussed in our Far Cry 1.3 evaluation is still there in the new ForceWare 66.93 drivers, which can prove to be an annoying problem. There is also the over-bright issue on the roof of the factory - we still feel that this doesn't look quite right on either vendor's video driver. Neither of the last two releases of ATI's Catalyst driver or of NVIDIA's ForceWare driver has improved the quality of the scene being rendered.

We also discussed the poor, blurred image quality in Need For Speed: Underground 2 - the blurring was only apparent on the GeForce 6600GT, but we have noticed it before on the two GeForce 6800GTs which we evaluated last week. We will investigate this matter further, as we feel that there is a possibility that the game is forcing the drivers to use the out-of-favour Quincunx Anti-Aliasing, which actually provides a rather blurred scene. There was also the issue of a poor quality sky on both video cards. This isn't anything new though, as we came across it during our high-end roundup - we can hope that this is a demo-specific image quality problem, and that it will be fixed in the upcoming release of the full game.

There are questions to be asked about the reduced memory clocks, and we are more than a little curious about the reasoning behind this. There are several possible explanations for NVIDIA's decision to reduce the memory clocks by 100MHz on the AGP version of the GeForce 6600GT. The obvious reason would be cost, due to the additional board components like the HSI bridge chip, which inevitably increase the cost of manufacturing the video card.

While we would expect something to be cut back on, there doesn't appear to be anything apart from the clock speed deficit. The memory that has been used is the same 2.0ns memory that can be found on the PCI-Express version of this card, so the physical costs for the modules will be the same in both cases. The other major explanation for the reduced memory clocks is that this could be NVIDIA's way of dangling the proverbial PCI-Express carrot in front of its customers - they will only get the highest performance by moving over to PCI-Express. This could prove to be an incentive for users to upgrade to the PCI-Express platform; however, we are still awaiting PCI-Express motherboards on the Athlon 64 front, so we feel that PCI-Express uptake isn't going to move as fast as both ATI and NVIDIA would hope, at least for the moment.

It will be interesting to see what board partners decide to do, as a few of them are likely to deviate from NVIDIA's reference clock speeds. We may well see AGP versions of the GeForce 6600GT clocked to 500/1000MHz, but only time will tell at this early stage.

Final, Final Thoughts:

On the whole, the performance delivered by the GeForce 6600GT is class leading, but due to a number of image quality issues, we found that the real-world gaming experience delivered by the GeForce 6600GT wasn't everything that it could be. Game play was smooth in most titles, with the exception of Far Cry, where our hitching issues came back to haunt us in the new release of the ForceWare driver. The real downer was the poor image quality in NFS: Underground 2; at present we are not 100% sure of the cause of the blurred appearance, but we will be investigating this further. Look out for some image quality examinations in the discussion thread over the next day or so.

The GeForce 6600GT on AGP has all the ingredients to take the lead as the best bang for buck video card in the mainstream. It does suffer from the image quality issues that we have mentioned above, and we hope that new drivers will fix these bugs and confirm the GeForce 6600GT's position as the king of mainstream AGP video cards. With a serious lack of presence from ATI's own bridge chip, RIALTO, and therefore no AGP Radeon X700 XT, we expect the GeForce 6600GT to hold on to the mainstream AGP top spot for a good while yet - it has the performance advantage over the Radeon 9800 Pro, and we feel that it is only a matter of time before the image quality in Need For Speed: Underground 2 catches up. On the grounds that this will be fixed over the coming weeks, we feel that the GeForce 6600GT AGP is well worth your hard-earned cash in favour of the ageing Radeon 9800 Pro - it will deliver a consistently better gaming experience.
