But wait, there’s more!

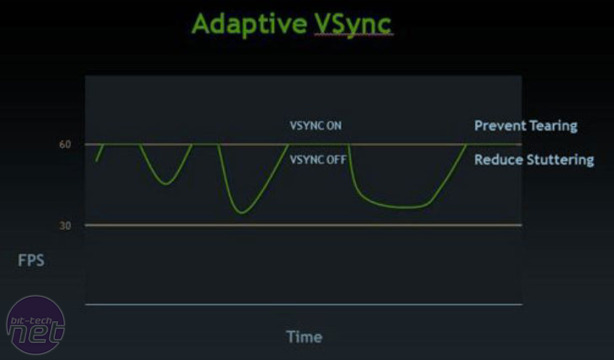

As well as a whole new architecture and GPU Boost, Nvidia has also added a number of new technologies to the Kepler GPU. Perhaps the most important is the addition, finally, of single-GPU Nvidia Surround, allowing users to power up to four monitors with a single card. It’s admittedly still not as well developed as AMD’s competing Eyefinity technology when it comes to setup or game support (using displays with different resolutions caused us issues and game UIs are still spread over all three monitors), but there are plenty of signs this is a technology Nvidia will now take more seriously. Custom resolutions and bezel correction are all in place, and there’s the added benefit of being able to combine Nvidia Surround with 3D Vision should you grab yourself three 120Hz panels and an oh-so-stylish pair of 3D Vision glasses.

Also new with the GTX 680 2GB is Adaptive Vsync, a technology that looks to avoid the judder effect when frame rates drop below 60fps with Vsync enabled on a 60Hz display. When Vsync is enabled, the frame rate can only be equal to the display’s refresh rate, or an integer fraction of it. This means that, should your frame rate drop below 60fps on a 60Hz display, Vsync forces the frame rate to 30fps, then 20fps, then 15fps, then 12fps and so on. The transition between these frame rates, particularly the 60fps-to-30fps drop, causes distracting image judder.
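The 60, 30, 20, 15, 12 sequence follows from each frame being held on screen for a whole number of refresh intervals. The sketch below illustrates that arithmetic under a simplifying assumption: classic double-buffered Vsync with a steady render rate. The function name `vsync_frame_rate` is our own, and this is not a description of how any particular driver is implemented.

```python
import math


def vsync_frame_rate(render_fps: float, refresh_hz: float = 60.0) -> float:
    """Displayed frame rate under simple double-buffered Vsync (illustrative model)."""
    if render_fps <= 0:
        raise ValueError("render_fps must be positive")
    # A finished frame has to wait for the next refresh, so each frame
    # occupies a whole number of refresh intervals (at least one).
    intervals = max(1, math.ceil(refresh_hz / render_fps))
    return refresh_hz / intervals


# Render rates just below each step fall to the next integer fraction of 60Hz.
for fps in (75, 60, 59, 45, 29, 19, 14):
    print(f"{fps}fps rendered -> {vsync_frame_rate(fps):g}fps displayed")
```

In this model, a GPU managing 59fps is displayed at just 30fps, which is why the first step down is the most noticeable.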

Adaptive Vsync, enabled in the Nvidia control panel, automatically disables Vsync should the frame rate dip below the monitor’s refresh rate, then re-enables it once performance climbs back above 60fps. The result is the elimination of image tearing that standard Vsync delivers when frame rates are above 60fps, and none of the judder when frame rates dip below 60fps.

Adaptive Vsync provides the benefits of Vsync, without the judder and steep frame rate drops
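The switching behaviour described above boils down to a single comparison against the refresh rate. Here is a minimal sketch of that policy; the function `adaptive_vsync` and its return values are hypothetical names for illustration, not Nvidia’s driver logic, which we haven’t seen.

```python
from typing import Tuple


def adaptive_vsync(render_fps: float, refresh_hz: float = 60.0) -> Tuple[bool, float]:
    """Return (vsync_enabled, displayed_fps) for the policy as described (illustrative)."""
    if render_fps >= refresh_hz:
        # Fast enough: keep Vsync on and cap output at the refresh rate (no tearing).
        return True, refresh_hz
    # Too slow: switch Vsync off so the frame rate is not forced down to a
    # lower step such as 30fps; tearing is possible in this state.
    return False, render_fps


print(adaptive_vsync(90))  # Vsync on, capped at the refresh rate
print(adaptive_vsync(45))  # Vsync off, frames shown as fast as they render
```

The trade-off in this model is that tearing can return whenever the frame rate is below the refresh rate, in exchange for avoiding the sudden halving of frame rate.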

FXAA and TXAA

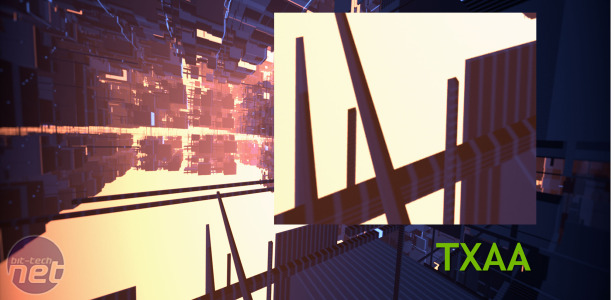

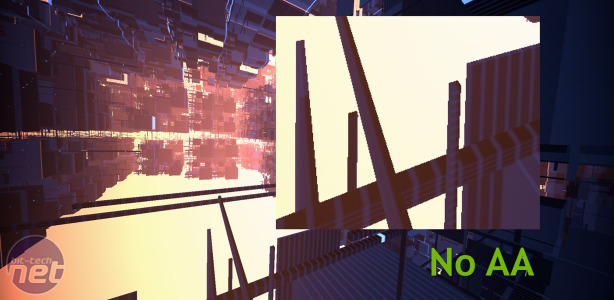

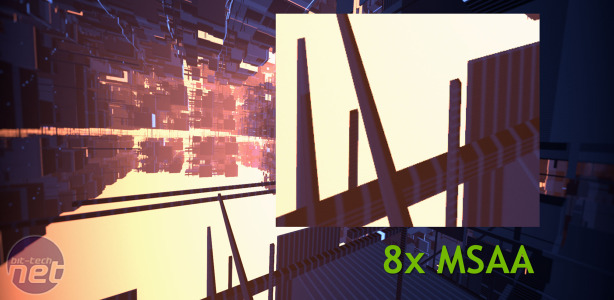

If you’ve played BF3 then you’ll likely have spotted the FXAA option in the graphics settings. FXAA is a form of anti-aliasing applied as an image filter rather than being a true form of multi-sample anti-aliasing. This has the benefit of requiring a much lower performance overhead, while still delivering a substantial increase in image quality over no anti-aliasing at all. With the new R300 family of Nvidia drivers, FXAA can now be enabled in the Nvidia control panel. While we’ve no doubt that FXAA offers inferior image quality to MSAA, often making for a rather smeary image, its much smaller performance hit and incredibly fast adoption rate amongst game developers make FXAA a useful tool.

More interesting is Nvidia’s new form of anti-aliasing, dubbed TXAA (T standing for temporal as there’s an ‘optional temporal component for better image quality’). TXAA is a combination of both MSAA and FXAA, and provides the image quality increase of true MSAA with the mild performance reduction of FXAA. Available as two modes, Nvidia claims that TXAA 1 offers image quality comparable to 8xMSAA, but with a performance hit similar to that of 2xMSAA, while TXAA 2 offers image quality in excess of 8xMSAA with a performance hit similar to that of 4xMSAA.

TXAA offers similar image quality to 8xMSAA, with a much smaller performance hit by combining MSAA and FXAA

It’s effectively 2xMSAA and 4xMSAA combined with a healthy dollop of FXAA, but the results are impressive. We usually dismiss new kinds of AA, particularly as industry-wide adoption is so rare, but Nvidia has been quick to sign software developers up to TXAA, and claims that MechWarrior Online, The Secret World, Eve Online, Borderlands 2, Unreal Engine 4, BitSquid, Slant Six Games and Crytek have all signed up to implement it.

Right, that’s the architecture side sorted; let’s take a look at the card itself...
