Nvidia GeForce GTX 480 1,536MB Review

Manufacturer: Nvidia

UK Price (as reviewed): £420 MSRP

US Price (as reviewed): $499

There have been few products in recent memory that have carried as much hype and expectation as Nvidia’s new line of DirectX 11 GF100 “Fermi” graphics cards. Following the positive reception of ATI’s DirectX 11 range last September and ATI’s subsequent monopoly on DirectX 11 graphics as its range increased with a relentless march of new models, the ball has been firmly placed in Nvidia’s court.

News about Fermi arrived in dribs and drabs over the months - notable highlights including Nvidia CEO Jen-Hsun Huang showing off a prototype card at GTC only for us to discover it was a mock-up, and as the months rolled by, numerous other rumours and leaks continued to sow seeds of uncertainty in the minds of the GPU buying public. It didn't all work against Nvidia though - where some saw the delays as signs of a product in trouble, there were plenty of others who held off from buying a new graphics card until Nvidia played its hand.

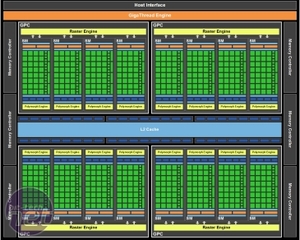

Well, just over six months after the release of the Radeon HD 5870, the wait is over; today (tonight if you're in the UK) sees the launch of Fermi, in the shape of Nvidia's new flagship card featuring its first DirectX 11 GPU: the GeForce GTX 480. On paper at least it’s an absolute monster. While we’ll be taking a much more in-depth look at the GF100 architecture in an upcoming article, the GF100’s specs are certainly impressive: 480 stream processors, double that of the GTX 285 (but down from Fermi’s stated maximum of 512, which upcoming Tesla cards will boast), split into 15 streaming multiprocessors and running at 700MHz. That makes this GPU an absolute brute, especially given its 40nm production process.

Alongside the stream processors are 60 texture units and 48 ROP units; the GPU is partnered with 1,536MB of GDDR5 (the first time an Nvidia card has used GDDR5), operating on a 384-bit memory interface and clocked at 924MHz (3696MHz effective), resulting in a peak memory bandwidth of 177.4GB/s, an eleven per cent improvement over the GDDR3 GeForce GTX 285.
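For anyone wanting to check the bandwidth figure, here's a rough sketch of the arithmetic: bus width in bytes multiplied by the effective data rate. The GTX 480 numbers are the ones quoted above; the GTX 285 figures (512-bit bus, 2,484MHz effective GDDR3) are our assumption from that card's published specs rather than anything stated in this review, and the function name is purely illustrative.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, effective_rate_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8          # bus width in bytes
    transfers_per_second = effective_rate_mhz * 1e6  # effective data rate
    return bytes_per_transfer * transfers_per_second / 1e9


# GTX 480: 384-bit bus, 924MHz GDDR5 (3,696MHz effective), as quoted above.
gtx480 = peak_bandwidth_gb_s(384, 3696)

# GTX 285: 512-bit bus, 2,484MHz effective GDDR3 (assumed, not from this review).
gtx285 = peak_bandwidth_gb_s(512, 2484)

uplift_percent = (gtx480 / gtx285 - 1) * 100

print(f"GTX 480: {gtx480:.1f}GB/s")       # GTX 480: 177.4GB/s
print(f"GTX 285: {gtx285:.1f}GB/s")       # GTX 285: 159.0GB/s
print(f"Uplift:  {uplift_percent:.1f}%")  # Uplift:  11.6%
```

Under those assumptions the uplift works out at a little under twelve per cent, in line with the roughly eleven per cent quoted above.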

It’s difficult to directly compare the GTX 480 to its predecessor though, as the new Fermi architecture is arguably the biggest change in Nvidia’s GPUs since the launch of G80 back in 2006. With the new requirements inherent in DirectX 11, an entirely new front end has been developed to accommodate the challenges of tessellation, with a much heavier emphasis on geometry as well as more traditional pixel shading. What this means for real-world performance we’ll investigate in our testing, but it’s no longer as simple as directly comparing the spec sheets.

What’s certain though is that despite the 40nm production process, long associated with low power consumption and reasonable thermals thanks to ATI’s Evergreen family of GPUs, this card is extraordinarily demanding in both power consumption and thermal output. Nvidia rates the GeForce GTX 480 at 250W max board power, an unrivalled amount for a single GPU graphics card - in comparison the HD 5870 is rated at 188W max board power.

Nvidia is also offering a raft of improvements to the existing GPGPU CUDA feature set with Fermi. PhysX support is of course included, but has been optimised to allow the GPU to switch more efficiently between physics and compute kernels, as well as to execute multiple small physics kernels in parallel. This could significantly reduce the performance hit of switching between rendering and GPGPU workloads such as PhysX or GPU AI, although few games released recently (barring Metro 2033) have chosen to take advantage of Nvidia’s CUDA enhancements.

Another new addition is support for Nvidia 3D Surround, which combines Nvidia’s existing stereoscopic 3D Vision technology with a three monitor setup similar to ATI’s Eyefinity. We had the chance to briefly try the setup at CeBIT. While we were impressed with the overall technology, it requires you to run two cards in SLI in order to get the three outputs required (as Nvidia cards are limited to two concurrent displays) and, as with Eyefinity, we’re still concerned by the issue of bezels obscuring the view, which particularly disrupts the 3D effect.
