Have you ever wondered what all of the fuss is about when it comes to memory? Memory manufacturers are going out of their way to sell you what they consider to be the best memory on the market for gamers, while also trying to push you into spending more money on more memory because - according to the memory makers - 2GB of memory is now becoming the industry standard for gaming systems.

The question is whether doubling your current 1GB configuration to 2GB will benefit your gaming experience. There are several options to consider, too. Should you keep your current 512MB DIMMs and add another two to give you a total of 2GB, accepting the drawbacks (if there are any) of using the 2T memory timing?

Or, should you attempt to sell your current modules and purchase a pair of 1GB DIMMs?

Alternatively, could you get away with just sticking with your existing setup?

There are so many options on the market today, and we're going to attempt to answer these questions over the next few pages, while deconstructing some of the confusion that surrounds memory timings.

Fred's driving along the road in his car, he spots something in the road and has to stop in a hurry, how long does it take for him to stop? There are two important components to this problem. The first is the most obvious (assuming something about his brakes, tyres and road surface) his speed will determine how far he travels when he presses the brake pedal.

The second, more subtle component, is his reaction time. How long it takes him to press that pedal. Actual experienced memory bandwidth is determined by two analogous factors; how quickly data can be transferred from the memory (memory speed), and how long it takes this transfer to start (memory latency).

The second, more subtle component, is his reaction time. How long it takes him to press that pedal. Actual experienced memory bandwidth is determined by two analogous factors; how quickly data can be transferred from the memory (memory speed), and how long it takes this transfer to start (memory latency).

Here's a quick primer in memory jargon:

Modern processors transmit 8 bits of data on every clock cycle, and all Athlon 64 and the older Socket 478 Pentium 4 CPUs run with a 200MHz memory bus. Newer Intel Pentium CPUs that use the LGA775 socket use either a 266MHz or 333MHz memory bus speed. The memory bus speed depends on whether they're an Extreme Edition or not - standard Pentium CPUs use a 266MHz memory bus, while Extreme Editions use a 333MHz bus.

If you multiply 400 (200 times 2 as Double Data Rate (DDR) memory runs at twice the clock speed) by 8, and you get a theoretical maximum figure of 3200Mbits/s transfer - hence the memory rating speed PC3200 found on the label of most new sticks of DDR memory. With the newer Pentium CPUs, you will see modules labelled with PC2-4200 (DDR2-533), PC2-5400 (DDR2-667) and modules up to PC2-8000 (DDR2-1000).

tRAS is the time required between the bank active command and the precharge command. Or in simpler terms, how long the module must wait before the next memory access can start. It doesn't have a great impact on performance, but it can impact system stability if set incorrectly. The optimal setting ultimately depends on your platform - the best thing to do is to run Memtest86 on your system with variable tRAS settings to find the fastest setting for your system.

The tRCD timing relates to the number of clock cycles taken between the issuing of the active command and the read/write command. In this time, the internal row signal settles enough for the charge sensor to amplify it. The lower this is set, the better - the optimal setting is either 2 or 3, depending on how capable your memory is. As with any other memory timing, setting this too low for your memory can cause in system instabilities.

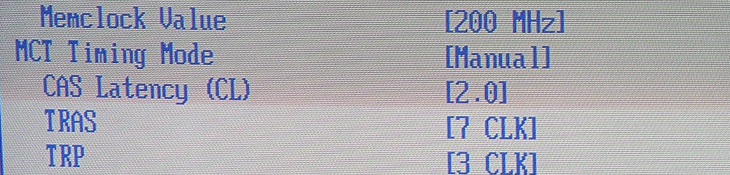

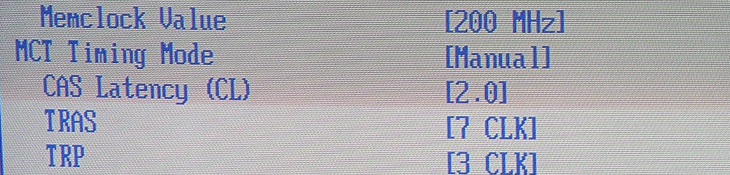

CAS Latency is the delay, in clock cycles, between sending a READ command and the moment the first piece of data is available on the outputs. Setting CAS to 2.0 seems to be the holy grail with memory manufacturers, but the difference between tight timings and high memory bus speeds is an arguement that we hope to settle over the course of this article.

The tRP timing is the number of clock cycles taken between the issuing of a precharge command and the active command. It could also be described as the delay required between deactivating the current row and selecting the next row. In conjunction with the tRCD timing, which relates to the time taken between the issuing of the active command and the read/write command, the time required to switch banks (or rows) and then select the next cell for reading/writing or refreshing is a combination of the two timings.

The Command Rate timing is another timing that is important to maximum theoretical memory bandwidth. It's the time needed between when a chip is selected and when commands can be issued to the selected chip. Typically, these are either 1 or 2 clocks, depending on a number of factors including the number of memory modules installed, the number of banks and the quality of the modules you've purchased. The majority of memory available today is claimed to run at the faster 1T memory timing.

Memory latencies are normally quoted in the following format, CAS-tRP-rRCD-tRAS Command Rate, an example being 3.0-4-4-8 1T, with the numbers corresponding to the individual latencies quoted in clock cycles. Lower numbers are better, though in theory tRAS should be tRCD added to CAS Latency plus 2.

Each memory call might take a few more clock cycles to achieve, but you can shift more data to the CPU in the same time and those clock cycles are shorter. By the same token, you may find that using the play to lower the latencies might make things a little faster than just using brute force to up the MHz. This is where the skill lies in the dark art of overclocking.

However, when 512MB modules were the norm, everyone went out and purchased a pair of 512MB DIMMs to run in dual channel. Now that 1GB modules are said to be the industry standard for gaming systems, we felt that it'd be worth running a little experiment to see whether it's worth selling your current pair of 512MB modules and getting a couple of 1GB modules. Or, are you best saving a bit of money by keeping hold of your current memory and adding another two 512MB modules to them to give you the same 2GB of memory with the consequence of running them at 2T with your Athlon 64? Alternatively, should you disregard the marketing hype and keep hold of your two 512MB memory modules?

The question is, whether doubling your current 1GB configuration to 2GB will be beneficial to your gaming experience or not. There are several options to take, too. Should you keep your current 512MB DIMMs and add another two to give you a total of 2GB, accepting the drawbacks (if there are any) from using the 2T memory timing?

Or, should you attempt to sell your current modules and purchase a pair of 1GB DIMMs?

Alternatively, could you get away with just sticking with your existing setup?

There are so many options on the market today, and we're going to attempt to answer these questions over the next few pages, while deconstructing some of the confusion that surrounds memory timings.

What does all of the jargon mean?

In the current computer market, where comprehending the intricacies of the latest generation of videos cards requires a great deal of stamina, it's easy to be deceived by memory and its many names - it's actually one of the simplest components in your system, and understanding what's best is easy.Fred's driving along the road in his car, he spots something in the road and has to stop in a hurry, how long does it take for him to stop? There are two important components to this problem. The first is the most obvious (assuming something about his brakes, tyres and road surface) his speed will determine how far he travels when he presses the brake pedal.

Here's a quick primer in memory jargon:

Memory Speed:

The link between the CPU and memory is called the memory bus. Often, it runs at the same speed as the Front Side Bus (FSB), which regulates the communication between the CPU and lots of other system components. The newer Intel Pentium processors seem to run better with the memory using an asynchronous 4:5 memory divider, meaning that the memory is running faster than the front side bus. The bus speeds are measured in MHz, or millions of clock cycles (CCs) per second.

A standard memory module moves 8 bytes (64 bits) of data in every transfer, and all Athlon 64 and the older Socket 478 Pentium 4 CPUs run with a 200MHz memory bus. Newer Intel Pentium CPUs that use the LGA775 socket use either a 266MHz or 333MHz memory bus speed. The memory bus speed depends on whether they're an Extreme Edition or not - standard Pentium CPUs use a 266MHz memory bus, while Extreme Editions use a 333MHz bus.

Multiply 400 (200 times 2, as Double Data Rate (DDR) memory transfers data twice per clock cycle) by 8 bytes and you get a theoretical maximum transfer rate of 3200MB/s - hence the PC3200 speed rating found on the label of most new sticks of DDR memory. With the newer Pentium CPUs, you will see modules labelled with PC2-4200 (DDR2-533), PC2-5400 (DDR2-667) and modules up to PC2-8000 (DDR2-1000).
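The arithmetic behind those speed ratings can be sketched in a few lines of Python. This is only an illustration: the function name and its parameters are our own, and it assumes a single 64-bit (8-byte) wide memory channel with two transfers per clock, as with DDR and DDR2.

```python
def peak_bandwidth_mb_s(bus_mhz, transfers_per_clock=2, bus_width_bytes=8):
    """Theoretical peak transfer rate in MB/s for one memory channel.

    bus_mhz             -- real memory bus clock in MHz (200 for DDR400)
    transfers_per_clock -- 2 for Double Data Rate memory (assumption)
    bus_width_bytes     -- 8 bytes, i.e. a 64-bit wide module (assumption)
    """
    return bus_mhz * transfers_per_clock * bus_width_bytes


# Real bus clocks for the module types mentioned in the text.
for label, bus_mhz in [("DDR400", 200), ("DDR2-533", 266.67), ("DDR2-667", 333.33)]:
    print(f"{label}: about {peak_bandwidth_mb_s(bus_mhz):.0f} MB/s")
```

The figure printed on a module's label is a rounded version of this theoretical peak, so the DDR2 results will not match the label to the last digit.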

Memory Latency:

Addressing memory is much like reading from a large, multi-page spreadsheet. It doesn't matter how quickly you can read: before you can start, you have to find the page the data you want is on (this is known as tRAS) and work your way to the row and column the data's stored on (tRCD). When you've found the cell you want, it takes some time before you start reading (CAS), and when you get to the end of a row you have to switch to the next, which takes time (tRP).

tRAS is the time required between the bank active command and the precharge command. Or in simpler terms, how long the module must wait before the next memory access can start. It doesn't have a great impact on performance, but it can impact system stability if set incorrectly. The optimal setting ultimately depends on your platform - the best thing to do is to run Memtest86 on your system with various tRAS settings to find the fastest stable setting for your system.

The tRCD timing relates to the number of clock cycles taken between the issuing of the active command and the read/write command. In this time, the internal row signal settles enough for the charge sensor to amplify it. The lower this is set, the better - the optimal setting is either 2 or 3, depending on how capable your memory is. As with any other memory timing, setting this too low for your memory can cause system instability.

CAS Latency is the delay, in clock cycles, between sending a READ command and the moment the first piece of data is available on the outputs. Setting CAS to 2.0 seems to be the holy grail for memory manufacturers, but the choice between tight timings and high memory bus speeds is an argument that we hope to settle over the course of this article.

The tRP timing is the number of clock cycles taken between the issuing of a precharge command and the active command. It could also be described as the delay required between deactivating the current row and selecting the next row. In conjunction with the tRCD timing, which relates to the time taken between the issuing of the active command and the read/write command, the time required to switch banks (or rows) and then select the next cell for reading/writing or refreshing is a combination of the two timings.

The Command Rate is another timing that is important to maximum theoretical memory bandwidth. It's the time needed between when a chip is selected and when commands can be issued to the selected chip. Typically, it is either 1 or 2 clocks, depending on a number of factors including the number of memory modules installed, the number of banks and the quality of the modules you've purchased. The majority of memory available today is claimed to run at the faster 1T memory timing.

Memory latencies are normally quoted in the following format: CAS-tRP-tRCD-tRAS Command Rate, an example being 3.0-4-4-8 1T, with the numbers corresponding to the individual latencies quoted in clock cycles. Lower numbers are better, though in theory tRAS should be tRCD added to CAS Latency plus 2.
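To make the notation concrete, here is a rough Python sketch that splits a timing string into its parts, converts clock cycles into nanoseconds and applies the tRAS rule of thumb quoted above. The function names are hypothetical, and the field order simply follows the format given in this article; other sources may list the timings in a different order.

```python
def parse_timings(text):
    """Split a string such as '3.0-4-4-8 1T' into named timings.

    Assumes the order used in this article: CAS-tRP-tRCD-tRAS,
    followed by the Command Rate (1T or 2T).
    """
    latencies, rate = text.split()
    cas, trp, trcd, tras = latencies.split("-")
    return {
        "cas": float(cas),          # CAS can be a half cycle, e.g. 2.5
        "trp": int(trp),
        "trcd": int(trcd),
        "tras": int(tras),
        "command_rate": int(rate.rstrip("Tt")),
    }


def cycles_to_ns(cycles, bus_mhz):
    """Convert clock cycles to nanoseconds; one cycle lasts 1000 / bus_mhz ns."""
    return cycles * 1000.0 / bus_mhz


def rule_of_thumb_tras(timings):
    """The guideline from the text: tRAS = tRCD + CAS Latency + 2."""
    return timings["trcd"] + timings["cas"] + 2


timings = parse_timings("3.0-4-4-8 1T")
print(cycles_to_ns(timings["cas"], 200))                    # CAS delay on a 200MHz bus: 15.0 ns
print(cycles_to_ns(timings["trp"] + timings["trcd"], 200))  # switching rows: 40.0 ns
print(rule_of_thumb_tras(timings))                          # 9.0 cycles
```

Note that the rule of thumb suggests 9 cycles for the example module, one more than its quoted tRAS of 8, which is why the text says "in theory".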

The Interaction:

Ideally, then, you want memory that can perform at the maximum number of MHz - to speed up the memory bus - while maintaining the lowest latencies. There are trade-offs to be had, however. Enthusiast memory often has a little bit of 'play' built in to the speeds, meaning that you can increase the speed of the memory bus, letting you run at 500MHz or more, if you let the latencies slip a bit.

Each memory call might take a few more clock cycles to achieve, but you can shift more data to the CPU in the same time and those clock cycles are shorter. By the same token, you may find that using the play to lower the latencies might make things a little faster than just using brute force to up the MHz. This is where the skill lies in the dark art of overclocking.
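A quick calculation shows why looser timings at a higher clock are not automatically slower. The sketch below compares three made-up configurations; the names and numbers are illustrative assumptions rather than measurements, and it assumes that memory sold as "500MHz" DDR runs on a real 250MHz bus with 8-byte transfers.

```python
def cas_delay_ns(cas_cycles, bus_mhz):
    """Time from a READ command to the first data, in nanoseconds."""
    return cas_cycles * 1000.0 / bus_mhz


def peak_bandwidth_mb_s(bus_mhz):
    """Theoretical peak for DDR: two 8-byte transfers per clock (assumption)."""
    return bus_mhz * 2 * 8


# Hypothetical settings: (CAS latency in cycles, real bus clock in MHz).
configs = {
    "tight timings, stock clock": (2.0, 200),
    "relaxed timings, overclocked": (3.0, 250),
    "middle ground, overclocked": (2.5, 250),
}

for name, (cas, mhz) in configs.items():
    print(f"{name}: {cas_delay_ns(cas, mhz):.1f} ns CAS delay, "
          f"{peak_bandwidth_mb_s(mhz)} MB/s peak")
```

Under these assumptions, CAS 2.5 at 250MHz waits exactly as long in real time as CAS 2.0 at 200MHz (10 ns) while offering 25 per cent more peak bandwidth, whereas CAS 3.0 at 250MHz trades 2 ns of extra delay for the same bandwidth gain.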

The Marketing:

Lots of companies make RAM that they say is great for enthusiasts. They claim that it will allow your system to run a faster memory bus, boosting overall system speed, that lower timings will increase your memory bandwidth, and that added overclockability will make your rig fly. They also claim that more memory is better.

However, when 512MB modules were the norm, everyone went out and purchased a pair of 512MB DIMMs to run in dual channel. Now that 1GB modules are said to be the industry standard for gaming systems, we felt that it'd be worth running a little experiment to see whether it's worth selling your current pair of 512MB modules and getting a couple of 1GB modules. Or are you better off saving a bit of money by keeping hold of your current memory and adding another two 512MB modules to give you the same 2GB of memory, with the consequence of running them at 2T with your Athlon 64? Alternatively, should you disregard the marketing hype and keep hold of your two 512MB memory modules?
