Nvidia GeForce GTX 1660 Ti Review feat. Palit StormX

February 22, 2019 | 14:00

For PC gamers dismayed at the seemingly ever increasing cost of their hobby, Nvidia’s RTX family of graphics cards has been a huge disappointment. With costs of equivalent-tier parts rising yet again this generation – to the point where the flagship GeForce part, the RTX 2080 Ti, came in at £1,100 – the masses have by and large been left out. Even for those lucky enough to be able to afford the buy-in, much of the cost of entry has undoubtedly been funding specialised cores inherited from the workstation space and dedicated to advanced rendering techniques (real-time ray tracing and DLSS) that few games have managed to utilise, even now.

The GTX 1660 Ti is the start of Nvidia’s answer to the cries of crestfallen PC gamers that aren’t interested in paying for hardware that can only be fully leveraged in a handful of titles, and would instead prefer a GPU that is faster all-round and has more usable features as well as a more realistic price tag – you know, the way upgrades used to work.

Astonishingly, this is Nvidia’s first new GPU launch for the sub-£300 market in over two and a half years (excluding GT parts and refreshes), with the original GTX 1060 6GB having launched in July 2016. AMD, meanwhile, has struggled to capitalise on this absence of green, content instead with simply hitting F5, and has only released refreshed Polaris (RX 580/RX 570) and, well, re-refreshed Polaris cards with RX 590. It’s been a thoroughly unexciting time, hasn’t it?

To be clear, then, GTX 1660 Ti uses a brand new GPU built using Turing CUDA cores but without any RT Cores (used for ray tracing) or Tensor Cores (used for AI processing and DLSS). Both of the latter are necessary for Nvidia’s ‘Hybrid Rendering’ technique (measured by the made up “RTX-OPS” unit) that games like Battlefield V and Metro Exodus are just now starting to realise, and they are also responsible for the RTX naming. Without these cores, then, we’re back to familiar GTX territory (and TFLOPs – remember those?!), though as to where the ‘16’ came from, your guess is as good as ours – answers on a postcard in the comments, please.

GTX 1660 Ti is also a clear admission that the full Turing architecture seen thus far doesn’t scale downwards as far as Nvidia might like, and just as clear a confirmation that Hybrid Rendering isn’t ready for mass adoption. The RT and Tensor Cores apparently require a certain level of traditional GPU horsepower to be effective in games. Evidently, that level is one Nvidia feels unable to reach at the sub-$300 price point, and it has thus decided to invest in designing and building a GPU that excludes them instead. Although official confirmation of other parts is non-existent, we fully expect this approach to be scaled downwards, since anything coming in below GTX 1660 Ti also won’t meet the performance threshold to make RT and Tensor cores “worth it”. Similarly, we expect the GTX 1660 Ti to be the Turing GTX flagship, since scaling this method upwards is highly likely to eat into RTX sales and confuse consumers even more.

Time for a shiny new spec table, don’t you think?

| Nvidia GeForce RTX 2080 Ti | Nvidia GeForce RTX 2080 | Nvidia GeForce RTX 2070 | Nvidia GeForce RTX 2060 | Nvidia GeForce GTX 1660 Ti | Nvidia GeForce GTX 1060 6GB | |

| Architecture | Turing (RTX) | Turing (RTX) | Turing (RTX) | Turing (RTX) | Turing (GTX) | Pascal |

| Codename | TU102 | TU104 | TU106 | TU106 | TU116 | GP106 |

| Base Clock | 1,350MHz | 1,515MHz | 1,410MHz | 1,365MHz | 1,500MHz | 1,506MHz |

| Boost Clock | 1,545MHz | 1,710MHz | 1,620MHz | 1,620MHz | 1,770MHz | 1,708MHz |

| Layout | 6 GPCs, 68 SMs | 6 GPCs, 46 SMs | 3 GPCs, 36 SMs | 3 GPCs, 30 SMs | 3 GPCs, 24 SMs | 2 GPCs, 10 SMMs |

| CUDA Cores | 4,352 | 2,944 | 2,304 | 1,920 | 1,536 | 1,280 |

| Tensor Cores | 544 | 368 | 288 | 240 | N/A | N/A |

| RT Cores | 68 | 46 | 36 | 30 | N/A | N/A |

| Texture Units | 272 | 184 | 144 | 120 | 96 | 80 |

| ROPs | 88 | 64 | 64 | 48 | 48 | 48 |

| L2 Cache | 6MB | 4MB | 4MB | 3MB | 1.5MB | 1.5MB |

| Peak TFLOPS (FP32) | 13.4 | 10 | 7.5 | 6.2 | 5.5 | 4.4 |

| Transistors | 18.6 billion | 13.6 billion | 10.8 billion | 10.8 billion | 6.6 billion | 4.4 billion |

| Die Size | 754mm2 | 545mm2 | 445mm2 | 445mm2 | 284mm2 | 200mm2 |

| Process | 12nm FFN | 12nm FFN | 12nm FFN | 12nm FFN | 12nm FFN | 16nm |

| Memory | 11GB GDDR6 | 8GB GDDR6 | 8GB GDDR6 | 6GB GDDR6 | 6GB GDDR6 | 6GB GDDR5 |

| Memory Data Rate | 14Gbps | 14Gbps | 14Gbps | 14Gbps | 12Gbps | 8Gbps effective |

| Memory Interface | 352-bit | 256-bit | 256-bit | 192-bit | 192-bit | 192-bit |

| Memory Bandwidth | 616GB/s | 448GB/s | 448GB/s | 336GB/s | 288GB/s | 192GB/sec |

| TDP | 250W | 215W | 175W | 160W | 120W | 120W |

TU116 is a newly manufactured GPU built on the same 12nm FinFET process as other Turing cards. It comes fully enabled in GTX 1660 Ti and brings Turing down to its smallest die size yet.

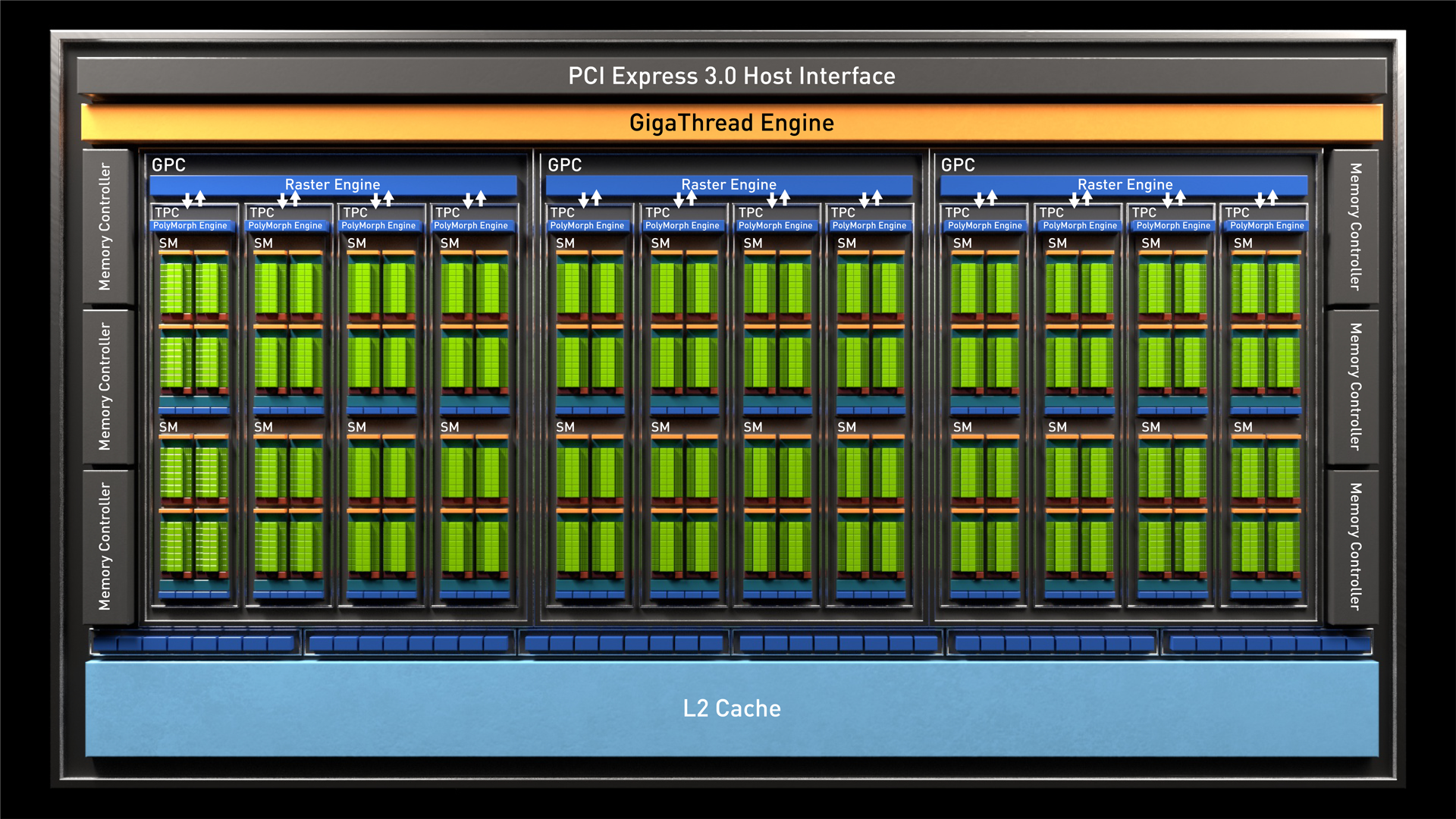

At a high level the structure of the new GPU remains the same as other Turing parts, with Graphics Processing Clusters (GPCs) subdivided into Texture Processing Clusters (TPCs, four per GPC here) and each TPC housing two TU116 Streaming Multiprocessors, or SMs. We discuss the Turing architecture in detail here, and it’s worth a read if you want to fully understand the less detailed overview below.

At the SM level is where things look different, and we believe this is the first time Nvidia has produced two physically different SMs for the same architecture. This is a pretty big deal in itself given the obvious cost overheads of designing, engineering, and validating another semi-new SM design, but Nvidia obviously believes it to be worth it instead of the alternative i.e. dedicating silicon to cores that would be functionally useless on lower-end Turing parts.

First up, the RT Core is gone entirely from the new SM, and this simply means no real-time ray tracing support in the few games that support or are set to support it – no big loss at this price level.

Next, the Tensor Cores also get the chop, although it’s more accurate to say they’ve been stripped back. Tensor Cores accelerate complex matrix-based FP16 math, and this functionality is now removed, so Nvidia’s DLSS technique is a no-go. However, simpler FP16 operations are used in games for low-precision effects (Nvidia gives Far Cry 5’s water simulation as an example), and RTX Turing parts rely on FP16 units embedded in the Tensor Cores to process these at twice the normal FP32 throughput. All 128 of these FP16 units remain, and thus so does the doubled FP16 throughput.

Beyond those two differences, the SM remains the same. The concurrent floating point (FP32) and integer (INT32) pathways are maintained with 64 FP32 cores matched by 64 INT32 cores per SM, allowing parallel execution of different instruction types instead of one after the other as with Pascal GPUs. The same four Texture Units are present, and the new unified architecture for shared memory, L1, and texture caching is also retained, with a 96KB pool available for each SM.

It’s also worth noting that the TU116 GPU supports Nvidia’s new variable rate shading techniques, where certain parts of a scene can have their pixel shading rate reduced without a reduction in perceived quality.

The concurrent integer pathway, reworked SM cache, and variable rate shading are the three big factors in Turing being faster than Pascal in traditional gaming workloads. It’s also why Nvidia’s own figure suggests ‘up to 1.5x’ uplift for the new part over GTX 1060 despite only a 20 percent increase in CUDA Cores and very similar clock speeds. That said, all three factors are workload-dependent variables rather than raw throughput gains, so exact performance differences between GTX 1060 and GTX 1160 Ti will vary depending on how well a game can leverage the new architecture and shading techniques.

When Turing launched, Nvidia boasted about its larger, faster L2 cache. For every 32-bit memory controller and eight ROPs, Turing RTX cards have 512KB of L2 cache, but for GTX 1660 Ti, we’re back to Pascal levels of 256KB per controller/ROP slice for a total of 1.5MB – the same as GTX 1060. We are unclear what the reasoning is here, and Nvidia also hasn’t revealed whether the L2 cache here is faster compared to Pascal or not, only stating that it is ‘closer in config’ to GTX 1060’s L2 cache, but L2 cache is certainly costly in terms of die size, and Nvidia reckons this wasn’t worth the potential performance uplift/power savings for the target market.

The ROP count of 48 is the same as both GTX 1060 and RTX 2060 and so is the 192-bit memory interface. A move from GDDR5 in GTX 1060 (or GDDR5X in the refresh) to GDDR6 sees the memory clock speed go up by 50 percent, delivering an equivalent increase in memory bandwidth. This is the first Turing card we’ve seen to use slower 12Gbps GDDR6 as opposed to the 14Gbps GDDR6 used thus far, though. Again, the reasoning is to do with keeping costs down and not really “needing” the extra bandwidth of 14Gbps. It also leaves more room for a faster memory refresh further down the line, especially as the memory controllers are the same as those in RTX parts.

The GTX 1660 Ti inherits the latest Nvidia encoder (NVENC) and decoder (NVDEC) units, bringing about the various benefits outlined here.

Power savings associated with the 12nm process, GDDR6, and the more efficient core/cache structure allow Nvidia to hit the same TDP as GTX 1060 of 120W in spite of the predicted performance gains.

GTX 1660 Ti is hard launch with stock expected immediately in the usual places. There is no reference or Founders Edition design, although Nvidia says it does require an eight-pin PCIe power connection. Board partners can also pick between different combinations of DVI, DisplayPort 1.4a, HDMI 2.0b, and even USB-C VirtualLink, as all are supported. G-Sync and VESA Adaptive Sync (i.e. FreeSync) are also supported over the DisplayPort connection. SLI, however, is not supported.

As the card that outright replaces the GTX 1060 6GB in Nvidia’s lineup, the GTX 1660 Ti is said to provide up to 1.5x the performance of the outgoing part and approximately 3x the performance of a GTX 960, which Nvidia says almost two thirds of its own install base is still using. It’s also banking on this being the go-to card for competitive gamers looking for a 120fps+ 1080p experience in popular multiplayer titles.

Let’s take a quick look at the Palit StormX implementation of GTX 1660 Ti before heading into the performance results.

MSI MPG Velox 100R Chassis Review

October 14 2021 | 15:04

Want to comment? Please log in.