Fujitsu claims 10,000-fold speed boost for new architecture

October 20, 2016 | 19:15

Companies: #fujitsu

Fujitsu Laboratories and the University of Toronto have announced the development of a novel computing architecture which, the pair claim, can boost the performance of certain specialised tasks by several orders of magnitude.

Fujitsu's new architecture isn't something you're likely to see on your desktop any time soon: the company, alongside research partner the University of Toronto, is concentrating on a specific class of problems dubbed 'combinatorial optimisation problems.' Put simply, these problems represent policy decisions made when time is a critical element: disaster recovery scenarios, investment portfolios, and even government economic policy. Evaluating numerous independent elements and then finding the best possible combination is a tricky problem for traditional computers, and one of the areas in which quantum computing could assist.
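To make the difficulty concrete, here is a minimal Python sketch, not taken from Fujitsu's materials, which brute-forces a toy selection problem. The item values and pairwise penalties are invented for illustration, as are the function names. With n yes/no choices there are 2^n combinations to check, which is why exhaustive search quickly stops being practical.

```python
from itertools import product

# Hypothetical toy data: the value of picking each item, and a penalty
# applied when two conflicting items are picked together.
VALUES = [4, 3, 5, 2]
PENALTIES = {(0, 2): 6, (1, 3): 1}


def score(bits):
    """Total value of the chosen items minus penalties for conflicting pairs."""
    total = sum(v for v, b in zip(VALUES, bits) if b)
    total -= sum(p for (i, j), p in PENALTIES.items() if bits[i] and bits[j])
    return total


def brute_force(n):
    """Score every one of the 2**n combinations and keep the best."""
    candidates = list(product((0, 1), repeat=n))
    best = max(candidates, key=score)
    return best, score(best), len(candidates)


best_bits, best_score, checked = brute_force(len(VALUES))
print(best_bits, best_score, checked)
```

Four items mean only 16 combinations, but every extra item doubles the count; at the 1,024-bit scale an exhaustive loop like this is out of the question.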

Fujitsu's architecture, though, isn't a quantum computer. Instead, it's based on existing semiconductor technology - its prototype device is built around off-the-shelf field-programmable gate arrays (FPGAs) - meaning it should, in theory, be easier to bring to market. The results, too, are impressive: a test system based around a basic optimisation circuit, the minimum constituent element of the architecture, showed a performance increase of 10,000 times compared with traditional processors.

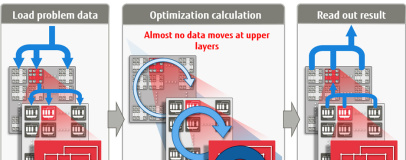

The secret, the company claims, is twofold: the architecture itself is based on multiple optimisation circuits driven in parallel and arranged in a hierarchical structure designed to minimise the amount of data that needs to be moved between nodes; an acceleration system, meanwhile, calculates scores for each tested element in parallel, speeding the discovery of the problem's next state.
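The second half of that description, scoring every candidate move at once and then choosing the next state, can be illustrated in software. The Python sketch below is one possible reading, not Fujitsu's circuit design: it assumes a problem expressed as bits with pairwise weights `W` (symmetric) and per-bit biases `b`, and uses a simulated-annealing acceptance rule, all of which are assumptions made here for illustration. A list comprehension stands in for what hardware would evaluate in parallel.

```python
import math
import random


def energy(x, W, b):
    """Cost of a bit assignment: pairwise weights plus per-bit biases."""
    n = len(x)
    pair = sum(W[i][j] * x[i] * x[j] for i in range(n) for j in range(i + 1, n))
    return pair + sum(b[i] * x[i] for i in range(n))


def flip_deltas(x, W, b):
    """Change in cost for flipping each bit, all scored from the same state."""
    n = len(x)
    return [
        (1 - 2 * x[i]) * (b[i] + sum(W[i][j] * x[j] for j in range(n) if j != i))
        for i in range(n)
    ]


def anneal(W, b, steps=2000, t_start=5.0, t_end=0.05, seed=0):
    """Repeatedly score all single-bit flips, then apply one accepted flip."""
    rng = random.Random(seed)
    n = len(b)
    x = [rng.randint(0, 1) for _ in range(n)]
    e = energy(x, W, b)
    best, best_e = x[:], e
    for step in range(steps):
        # Geometric cooling schedule from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (step / max(steps - 1, 1))
        deltas = flip_deltas(x, W, b)
        # Improving flips always qualify; worsening ones qualify with a
        # probability that shrinks as the temperature drops.
        accepted = [
            i for i, d in enumerate(deltas)
            if d <= 0 or rng.random() < math.exp(-d / t)
        ]
        if not accepted:
            continue
        i = rng.choice(accepted)
        x[i] ^= 1
        e += deltas[i]
        if e < best_e:
            best, best_e = x[:], e
    return best, best_e


# Hypothetical three-bit problem with a symmetric weight matrix.
W = [[0, 2, -1], [2, 0, 3], [-1, 3, 0]]
b = [-1, -2, 1]
solution, cost = anneal(W, b)
print(solution, cost)
```

On a conventional processor the scoring step costs time proportional to the number of bits on every iteration; the claimed hardware advantage would come from evaluating those scores simultaneously.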

The company's current system runs on 1,024-bit problems; by 2018, Fujitsu aims to have a prototype capable of analysing problems between 100,000 bits and 1,000,000 bits in size. More information is available from the company's press release.
