Investigating SATA 6Gbps Performance

November 16, 2009 | 10:00

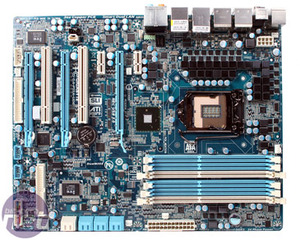

Gigabyte GA-P55A-UD6

Gigabyte's latest top-of-the-range UD6 is the "A" variant of its P55 range, and it arrives with a new "333" marketing initiative (that's half the beast, no doubt). 333 stands for USB 3.0, SATA 3.0 (that's a naming failure according to the SATA-IO spec right there) and 3x the USB power provision (i.e. it's no longer limited to just 500mA).

The P55A-UD6 still has the excessive 24-phase power on the CPU, as well as more useful features such as a POST LED readout, six DIMM slots (although it's still only dual channel), plenty of PCI and PCI-Express peripheral connectivity, dual BIOS, a 2oz copper PCB, an upgraded LOTES LGA1156 socket and its trademark pastel blue design. However, the ten SATA ports have been chopped down to just eight, although the extra two (over the standard six) are the ones upgraded to SATA 6Gbps.

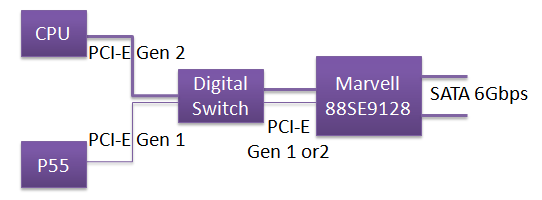

Without a PLX chip, Gigabyte appears to have plumbed the Marvell SATA 6Gbps ASIC straight into the P55 chipset, effectively bottlenecking its two ports at PCI-Express x1 Gen 1 bandwidth. This is not the case though, as the board can select whether the Marvell chip uses P55 PCI-Express Gen 1 or CPU PCI-Express Gen 2 using a digital switch and some convoluted but clever tricks.
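To put rough numbers on that bottleneck, here's a quick back-of-the-envelope sketch. It assumes the standard line rates (2.5GT/s and 5GT/s per lane for PCI-Express Gen 1 and Gen 2, 3Gbps and 6Gbps for SATA) and the 8b/10b encoding all four links use. The figures are theoretical per-direction ceilings rather than anything we measured, and the function and variable names are just for illustration.

```python
# Theoretical per-direction bandwidth after 8b/10b encoding
# (every data byte costs 10 bits on the wire).
def usable_mb_per_s(line_rate_gbps: float) -> float:
    return line_rate_gbps * 1000 / 10

# Standard line rates in Gbps (GT/s for a single PCI-Express lane).
LINE_RATES = {
    "PCI-Express x1 Gen 1": 2.5,
    "PCI-Express x1 Gen 2": 5.0,
    "SATA 3Gbps": 3.0,
    "SATA 6Gbps": 6.0,
}

bandwidth = {name: usable_mb_per_s(rate) for name, rate in LINE_RATES.items()}

for name, mb in bandwidth.items():
    print(f"{name}: {mb:.0f}MB/s")
```

On these figures a single Gen 1 lane tops out below even one SATA 3Gbps port, while a Gen 2 lane doubles that but still sits under the SATA 6Gbps ceiling.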

In the BIOS there is a specific setting for the Marvell chip. If it's set to Auto and a SATA 6Gbps hard drive is attached to the Marvell chip, it will operate at PCI-Express Gen 2 bandwidth and drop the graphics card to PCI-Express x8. However, if a SATA 3Gbps hard drive is attached to the Marvell chip, it will operate at PCI-Express Gen 1 bandwidth and the graphics card will operate at the full PCI-Express x16. This doesn't extend to hot-plugging drives in and out though - the PC must be rebooted to change the PCI-Express lane switch. The same goes for Gigabyte's USB 3.0 support as well.

If the BIOS is set to "Turbo SATA 3.0", then the PCI-Express connection is locked to CPU PCI-Express Gen 2 bandwidth, regardless of what hard drive is attached. It's a clever and cheaper way of working around the bandwidth problem, but it does diminish the available PCI-Express lanes to the graphics card, which we've already shown does affect the performance to some degree.
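The two BIOS modes boil down to a small decision table, sketched below. This is only a model of the behaviour described above, not Gigabyte's firmware: the `LinkConfig` type and `resolve_at_boot` function are hypothetical names, and the x8 figure for "Turbo SATA 3.0" is an assumption based on what Auto does with a 6Gbps drive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkConfig:
    marvell_link: str  # which PCI-Express link feeds the Marvell chip
    gpu_lanes: int     # lanes left for the graphics card


def resolve_at_boot(bios_setting: str, drive: str) -> LinkConfig:
    """Hypothetical model of the lane switch, evaluated once per boot."""
    if bios_setting == "Turbo SATA 3.0":
        # Locked to the CPU's Gen 2 lanes whatever drive is attached.
        # Assumption: the graphics card drops to x8, as in Auto mode.
        return LinkConfig("CPU PCI-Express Gen 2", 8)
    if bios_setting == "Auto":
        if drive == "SATA 6Gbps":
            return LinkConfig("CPU PCI-Express Gen 2", 8)
        if drive == "SATA 3Gbps":
            return LinkConfig("P55 PCI-Express Gen 1", 16)
        raise ValueError(f"drive type not covered here: {drive!r}")
    raise ValueError(f"unknown BIOS setting: {bios_setting!r}")


# Swapping drives while the PC is running changes nothing until a reboot,
# so the function would only be called again at the next boot.
print(resolve_at_boot("Auto", "SATA 3Gbps"))
```

Modelling it as a function of the state at boot makes the hot-plug caveat explicit: the result is fixed until the next reboot.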
