More GeForce GTX 280

We briefly mentioned power draw on the previous page – Nvidia says that in the worst case, the GeForce GTX 280 reference card will draw around 236W of board power. As a result, the card is equipped with both six- and eight-pin power connectors, and both of them need to be connected for the card to work. Unfortunately, you can't connect a pair of six-pin supplementary power adapters and expect the card to function correctly.

In fact, if you do, your display will not initialise, because the card can only draw a maximum of 75W through the PCI-Express slot – this is to maintain backwards compatibility with older PCIe 1.1 motherboards.
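The 236W figure makes the connector requirement easy to check with some simple arithmetic. The sketch below assumes the standard PCI Express power ratings of 75W for the x16 slot, 75W for a six-pin connector and 150W for an eight-pin connector. These ratings come from the PCIe specifications rather than from Nvidia's briefing, and the function name is purely illustrative.

```python
# Rated power limits in watts. These are PCI Express specification ratings
# (an assumption here, not figures quoted by Nvidia in this briefing).
SLOT_W = 75        # PCIe x16 slot
SIX_PIN_W = 75     # six-pin PCIe auxiliary connector
EIGHT_PIN_W = 150  # eight-pin PCIe auxiliary connector

GTX280_BOARD_POWER_W = 236  # Nvidia's worst-case board power figure


def available_power(six_pins: int, eight_pins: int) -> int:
    """Total rated power: the slot plus whichever auxiliary connectors are attached."""
    return SLOT_W + six_pins * SIX_PIN_W + eight_pins * EIGHT_PIN_W


for label, six, eight in [("6-pin + 8-pin", 1, 1), ("2 x 6-pin", 2, 0)]:
    budget = available_power(six, eight)
    verdict = "enough" if budget >= GTX280_BOARD_POWER_W else "short"
    print(f"{label}: {budget}W available vs {GTX280_BOARD_POWER_W}W needed -> {verdict}")
```

With one six-pin and one eight-pin connector the rated budget is 300W, comfortably above 236W, whereas two six-pin connectors provide only 225W. This is only a budget check; it doesn't model how the card itself detects which connectors are attached.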

Moving around to the PCI bracket, there is a pair of dual-link DVI-I outputs, each with the required crypto-ROM (HDCP) keys to drive HD video streams at resolutions up to 2,560 x 1,600. Both of these connections also support HDMI via a DVI-to-HDMI dongle and, because Nvidia has included an S/PDIF connector next to the two power sockets, audio can be carried across the HDMI connection as well.
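To see why dual-link DVI is needed at 2,560 x 1,600, it helps to estimate the required pixel clock. This is a rough sketch that assumes a 60Hz refresh rate and a hypothetical flat 10 per cent blanking overhead on top of the active pixels, compared against the 165MHz per-link pixel-clock ceiling of DVI.

```python
import math

TMDS_LINK_MAX_MHZ = 165.0  # per-link DVI pixel-clock ceiling


def required_pixel_clock_mhz(width, height, refresh_hz, blanking_overhead=0.10):
    """Active pixels per second plus an assumed (hypothetical) blanking overhead, in MHz."""
    active_pixels_per_s = width * height * refresh_hz
    return active_pixels_per_s * (1 + blanking_overhead) / 1e6


clock = required_pixel_clock_mhz(2560, 1600, 60)
links = math.ceil(clock / TMDS_LINK_MAX_MHZ)  # how many 165MHz links are needed
print(f"~{clock:.1f} MHz needed -> {links} TMDS link(s)")
```

At roughly 270MHz, the estimate is well beyond a single 165MHz link but within the 330MHz that two links provide. Real display timings use specific blanking intervals rather than this flat overhead.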

There is also a seven-pin analogue video-out connector that supports S-Video natively, and can also support composite and component connections via a break-out dongle. In terms of audio format support over HDMI, Nvidia tells us that it supports two-channel LPCM at up to 192kHz, six-channel Dolby Digital (AC3) at up to 48kHz and DTS 5.1 at up to 96kHz. It's a shame that there isn't support for eight-channel LPCM, or for Dolby TrueHD and DTS-HD MA bitstreaming.

And for those of you wanting to play back HD video, you'll be pleased to know that GT200 includes Nvidia's latest PureVideo technology, which means video encoded in H.264, VC-1 and MPEG-2 is accelerated. Like the GeForce 9-series, there's also support for image post-processing, dynamic contrast enhancement, and blue, green and skin tone enhancements.

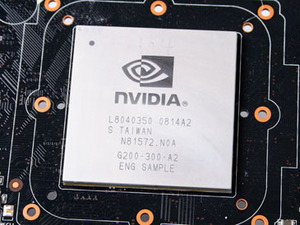

Looking under the heatsink, it's interesting to see that Nvidia has opted to move back to a separate display output controller. This is very similar to the one included on the original G80-based graphics cards, but there are some additional capabilities included in the new revision – the most notable being support for 10-bit-per-colour outputs.
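The jump from 8 to 10 bits per colour is easiest to appreciate in numbers. Assuming a conventional three-channel RGB output, this snippet counts the levels per channel, the total number of colours and the bits per pixel at each depth.

```python
def colour_stats(bits_per_channel: int) -> tuple[int, int, int]:
    """Return (levels per channel, total colours, bits per pixel) for an RGB output."""
    levels = 2 ** bits_per_channel
    return levels, levels ** 3, 3 * bits_per_channel


for bits in (8, 10):
    levels, total, bpp = colour_stats(bits)
    print(f"{bits}-bit: {levels} levels per channel, {total:,} colours, {bpp} bits per pixel")
```

Going from 8 to 10 bits quadruples the number of steps in each channel (256 to 1,024), which is what allows smoother gradients with less visible banding.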

Sadly, there's no direct support for DisplayPort, although Nvidia says that the GPU can support it via a custom add-in card solution. What's disappointing here is that, generally speaking, Nvidia's partners aren't allowed to move away from the reference design on high-end cards because Nvidia typically sells them a kit with the GPU already soldered onto the PCB.

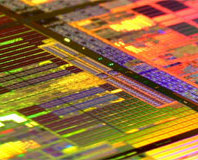

Moving on from that, we spent some time talking with Nvidia's Tony Tamasi about the die size, as it's hidden underneath a giant freakin' heatspreader. Sadly, Tamasi wasn't keen to reveal the exact size of the die but, to put things into perspective, the package is bigger than G80's. Tamasi said that the die size was between 500mm² and 600mm² and, from looking at pictures of GT200 wafers, it looks as if Nvidia can fit around 90-100 dies on a 300mm wafer – that's not a lot by any stretch of the imagination. And although Nvidia didn't show off a GT200 wafer during my time in Santa Clara, that is significantly fewer dies than you could fit on a 300mm wafer of G80s.
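Tamasi's 500-600mm² range can be sanity-checked against the 90-100 dies we counted. The sketch below uses a common first-order approximation for gross dies per wafer (wafer area divided by die area, minus an edge-loss term); it is our illustration rather than Nvidia's method, and it ignores scribe lines, edge exclusion and defects.

```python
import math

WAFER_DIAMETER_MM = 300.0


def gross_dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """First-order estimate: wafer area / die area, minus partial dies lost at the edge."""
    radius = wafer_diameter_mm / 2
    wafer_area = math.pi * radius ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)


for area in (500, 600):
    print(f"{area}mm² die: ~{gross_dies_per_wafer(area)} dies per 300mm wafer")
```

Under this crude model, a 500mm² die gives around 111 dies per wafer and a 600mm² die around 90, so a count of 90-100 points towards the upper half of Tamasi's range.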
