Intel has used part of its Architecture Day to announce the next generation of its integrated graphics architecture, Gen11, which is due in 2019 and will be the company's first to deliver 1 TFLOP of performance. It has also reaffirmed its commitment to deliver new discrete GPUs in 2020 using the newly announced Xe architecture.

Boasting that roughly 200 million new PCs are sold every year featuring Intel’s integrated graphics, Raja Koduri, senior vice president of Intel architecture and graphics solutions (and formerly of AMD), claims that the aim of the Gen11 iGPU is to make many more games playable on just such PCs without the need for a discrete GPU.

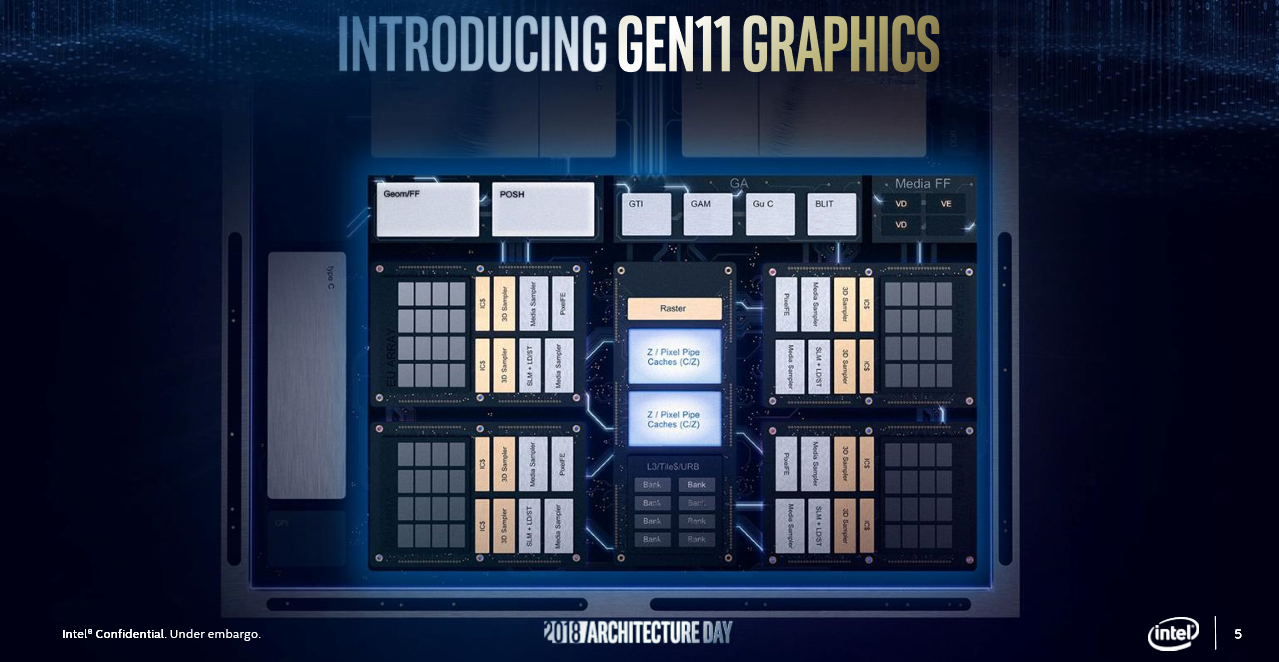

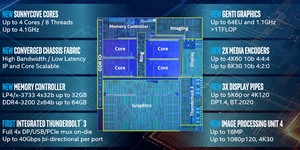

The Gen11 GPU will find its way into Intel’s 10nm-based CPUs at some point in 2019, and it will offer twice the performance per clock of the previous offering, Gen9. Gen10 has been skipped in the nomenclature, which may be a further indication that Cannon Lake CPUs will never emerge in mass production. The Gen11 GPU will also hit over 1 TFLOP of total compute performance, a first for an Intel mobile GPU. That performance boost is perhaps unsurprising when you learn that in its standard configuration (known as the GT2 tier), Gen11 will feature 64 Execution Units, far more than the 24 found in the current offerings based on the Gen9 (e.g. Intel HD Graphics 520 in Skylake CPUs) and ‘Gen9.5’ (e.g. Intel HD Graphics 620 in Kaby Lake and Coffee Lake CPUs) microarchitectures. Other improvements include a much larger L3 cache (3MB, roughly four times the previous size) and improved lossless compression algorithms.
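To see how 64 Execution Units could add up to roughly 1 TFLOP, here is a back-of-the-envelope sketch. It assumes each EU has eight FP32 lanes (two SIMD4 units, as publicly documented for Gen9 and assumed here to carry over to Gen11) and that a fused multiply-add counts as two operations. The 1.0GHz clock is purely illustrative, as Intel has not stated Gen11 clock speeds, and the function names are our own.

```python
# Rough peak FP32 throughput estimate for an Intel integrated GPU.
# Assumption: 8 FP32 lanes per EU (two SIMD4 units), as documented for Gen9
# and assumed unchanged for Gen11.
FP32_LANES_PER_EU = 8
# A fused multiply-add is conventionally counted as two floating-point ops.
FLOPS_PER_FMA = 2


def peak_gflops(execution_units, clock_ghz):
    """Theoretical peak GFLOPS = EUs x lanes x 2 (FMA) x clock in GHz."""
    return execution_units * FP32_LANES_PER_EU * FLOPS_PER_FMA * clock_ghz


def clock_for_target(execution_units, target_gflops):
    """Clock in GHz needed to reach a target peak GFLOPS figure."""
    return target_gflops / (execution_units * FP32_LANES_PER_EU * FLOPS_PER_FMA)


# Illustrative 1.0GHz clock for both parts (not an Intel-quoted figure).
gen9_gt2 = peak_gflops(24, 1.0)
gen11_gt2 = peak_gflops(64, 1.0)

print(f"Gen9 GT2 (24 EUs):  {gen9_gt2:.0f} GFLOPS")
print(f"Gen11 GT2 (64 EUs): {gen11_gt2:.0f} GFLOPS")
print(f"Clock for 1 TFLOP with 64 EUs: {clock_for_target(64, 1000):.3f} GHz")
```

Under these assumptions, a 64 EU part crosses the 1 TFLOP mark at just under 1GHz, whereas a 24 EU part at the same clock sits well below half of that.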

Coarse Pixel Shading (CPS) introduces the ability to alter the pixel shading rate in a game in real time based on a variety of control factors. It’s similar to what Nvidia does in its Turing architecture with Variable Rate Shading. Applications will have different options for controlling it. Intel gave two examples: setting the rate per object according to level of detail, and foveated rendering, in which centre-focused content such as VR, racing, and FPS games gets a higher pixel shading rate in the centre of the image.
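The foveated case can be illustrated with a minimal sketch that picks a coarser shading block the further a pixel is from the centre of the screen. This is not Intel's API or algorithm: the function name, the two thresholds, and the 1/2/4 block sizes are all hypothetical, chosen only to show the idea of trading shading rate for distance from the focal point.

```python
import math


def foveated_block_size(x, y, width, height, inner=0.25, outer=0.6):
    """Return a hypothetical coarse-pixel block size for pixel (x, y).

    1 means every pixel is shaded individually, 2 means one shading result
    is shared across a 2x2 block, and 4 means it is shared across a 4x4 block.
    The inner/outer thresholds are illustrative values, not Intel's.
    """
    # Offset from the screen centre, normalised so each axis spans -1..1.
    dx = (x - width / 2) / (width / 2)
    dy = (y - height / 2) / (height / 2)
    # Scale so that the screen corners sit at a distance of 1.0.
    dist = math.hypot(dx, dy) / math.sqrt(2)

    if dist <= inner:
        return 1  # full shading rate in the centre of the image
    if dist <= outer:
        return 2  # reduced rate in the mid-field
    return 4      # coarsest rate towards the edges


# Sample a few positions on a 1920x1080 frame.
for name, (px, py) in {
    "centre": (960, 540),
    "mid-right": (1440, 540),
    "top-left corner": (0, 0),
}.items():
    print(f"{name}: {foveated_block_size(px, py, 1920, 1080)}x shading block")
```

A real implementation would set the rate through the graphics API rather than per pixel on the CPU; the sketch only shows how a centre-weighted policy might be expressed.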

Confirmed features in the Gen11 GPU include upgraded encode/decode capabilities, with support expected for 4K video streams and 8K content creation in ‘constrained power envelopes’. More importantly for gamers, Gen11 will finally see Intel implement ‘Intel Adaptive Sync’ by tapping into the VESA Adaptive Sync standard, which AMD also leverages with FreeSync. HDR will be supported, and there will be native HDR tone mapping too.

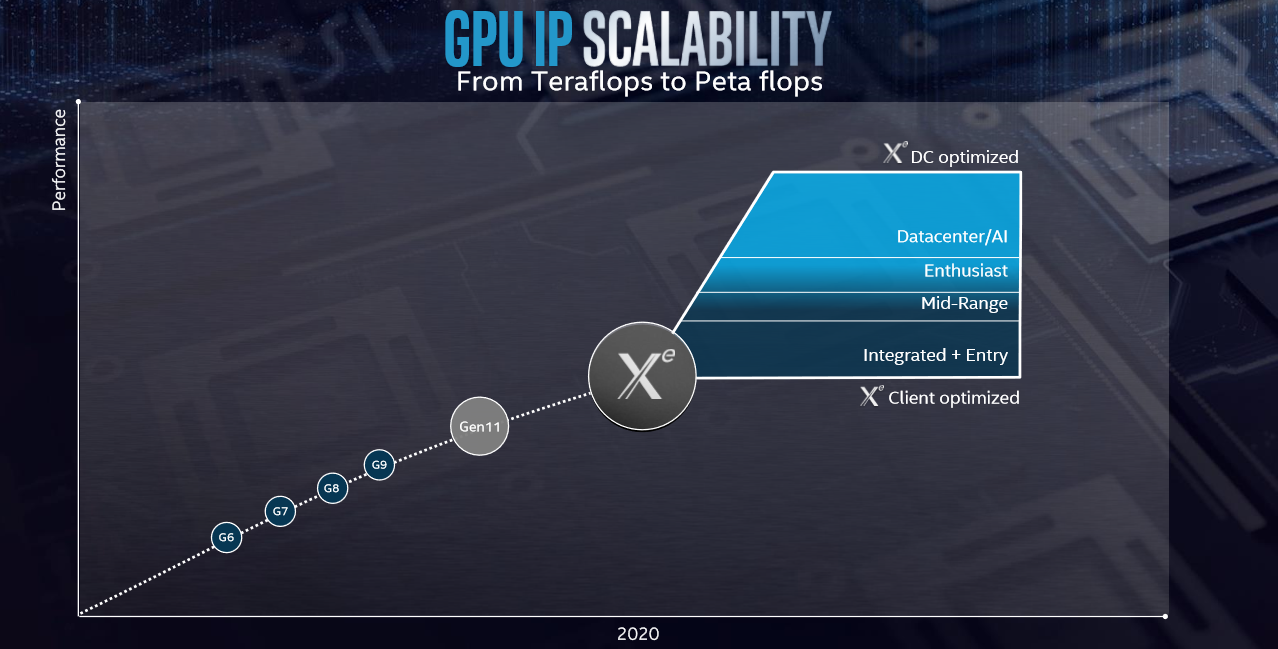

Of course, the question on most people’s lips when it comes to Intel and graphics relates to its plans to introduce discrete GPUs for both client and data centre use and thus to compete directly with Nvidia and AMD. On this front, Intel sadly had little to announce beyond a name for the upcoming architecture that will deliver these products: Xe (stylised as Xᵉ). Intel promises that it will scale from teraflops of performance (client, i.e. home users, creators, and gamers) to petaflops (data centre), and has confirmed that it will supplant Gen11 as an integrated graphics solution. The company expects to deliver Xe-based GPUs in 2020.
