Researchers boost CPU performance with Jenga cache system

July 10, 2017 | 12:20

Companies: #massachusetts-institute-of-technology #mit #research #researchers

Researchers at the Massachusetts Institute of Technology (MIT) have announced a novel form of cache for future processors, which it is claimed can boost performance by up to 30 percent and drop energy usage up to 85 percent on many-core chips.

Modern processors are extremely fast, to the point where pulling data from system memory represents a severe bottleneck. To address this, they include small amounts of extremely high-speed memory which can temporarily store - or cache - data for processing purposes. Cache memory itself isn't new, though its origins in modern computing are perhaps not as old as you may think: Intel didn't add support for external cache memory management to the x86 processor family until the i386SL, a variant of the 80386 designed for laptop computing which supported between 16KB and 64KB of optional external cache, and it wasn't until the 80486 launched in 1989 that internal cache was an option.

Since then, cache memory has been a standard feature of x86 processors in ever-increasing amounts typically tiered with small amounts of ultra-high-speed memory giving way to larger amounts of still-pretty-high-speed memory in multiple levels. It's here that researchers at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) claim to have made a breakthrough: a new form of caching which can boost both power efficiency and raw performance considerably by reallocating cache memory according to the needs of particular applications.

'What you would like is to take these distributed physical memory resources and build application-specific hierarchies that maximise the performance for your particular application,' explained Daniel Sanchez, assistant professor in the Department of Electrical Engineering and Computer Science (EECS) at MIT and member of the group responsible for the new system. 'That depends on many things in the application. What’s the size of the data it accesses? Does it have hierarchical reuse, so that it would benefit from a hierarchy of progressively larger memories? Or is it scanning through a data structure, so we’d be better off having a single but very large level? How often does it access data? How much would its performance suffer if we just let data drop to main memory? There are all these different tradeoffs.'

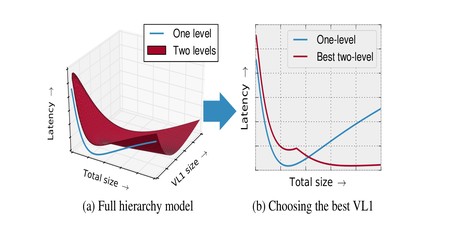

Dubbed Jenga, and based on a previous platform called Jigsaw, the new system builds a three-dimensional model of the tradeoff between latency and memory capacity which it then uses to find the best possible way to allocate the cache taking into account the physical distance between the particular core of the processor on which a thread is running and the physical memory chips themselves. The results are undeniably impressive: Running on a simulated 36-core processor, Jenga boosted processing performance by between 20 and 30 percent while dropping energy consumption by between 30 and a whopping 85 percent.

'There’s been a lot of work over the years on the right way to design a cache hierarchy,' said David Wood, a professor of computer science at the University of Wisconsin at Madison, in support of the team's work. 'There have been a number of previous schemes that tried to do some kind of dynamic creation of the hierarchy. Jenga is different in that it really uses the software to try to characterise what the workload is and then do an optimal allocation of the resources between the competing processes, and that, I think, is fundamentally more powerful than what people have been doing before. That’s why I think it’s really interesting.'

The team's paper, Jenga: Software-Defined Cache Hierarchies, is available from MIT now (PDF warning).

MSI MPG Velox 100R Chassis Review

October 14 2021 | 15:04

Want to comment? Please log in.